Agile Drone Flight

We work on perception, learning, planning, and control strategies that enable extremely agile maneuvers at the limits of the actuators.

While pushing the boundaries of our quadrotors, we also enable them to recover from difficult conditions in case of a failure.


Drone Racing

Drone racing is a popular sport in which professional pilots fly small quadrotors through complex tracks at high speeds.

Developing a fully autonomous racing drone is difficult due to challenges that span dynamics modeling, onboard perception, localization and mapping, trajectory generation, and optimal control.


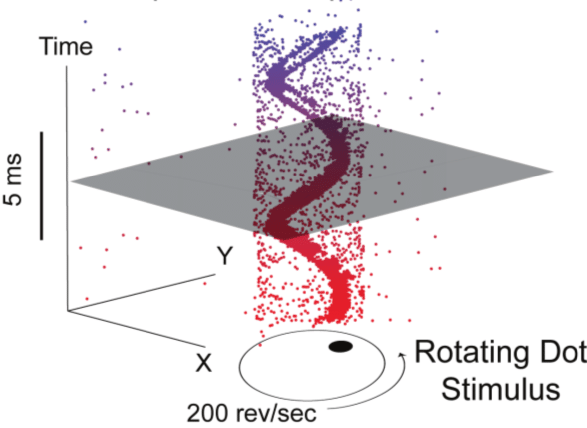

Event-based Vision

The Dynamic Vision Sensor (DVS) is a bio-inspired vision sensor that works like your eye.

Instead of wastefully sending entire images at fixed frame rates, only the local pixel-level changes caused by movement in a scene are transmitted at the time they occur.

The result is a stream of "address-events" at microsecond time resolution, equivalent to or better than conventional high-speed vision sensors running at thousands of frames per second.
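As a rough illustration of the idea, the sketch below emits an event for each pixel whose log intensity changed by more than a contrast threshold between two intensity snapshots. This is a simplified, frame-based approximation: a real DVS pixel fires asynchronously in hardware. The function name `generate_events`, the threshold value, and the `(x, y, t, polarity)` tuple layout are assumptions made for this example.

```python
import math

def generate_events(prev_frame, curr_frame, t_us, threshold=0.2, eps=1e-3):
    """Return (x, y, t_us, polarity) for each pixel whose log intensity
    changed by at least `threshold` (an assumed contrast threshold)."""
    events = []
    for y, (prev_row, curr_row) in enumerate(zip(prev_frame, curr_frame)):
        for x, (p, c) in enumerate(zip(prev_row, curr_row)):
            # eps avoids log(0) for completely dark pixels
            delta = math.log(c + eps) - math.log(p + eps)
            if abs(delta) >= threshold:
                polarity = 1 if delta > 0 else -1  # brighter: +1, darker: -1
                events.append((x, y, t_us, polarity))
    return events

# Two tiny 3x2 intensity snapshots; only two pixels change.
prev_frame = [[0.5, 0.5, 0.5],
              [0.5, 0.5, 0.5]]
curr_frame = [[0.5, 0.9, 0.5],
              [0.5, 0.5, 0.2]]

events = generate_events(prev_frame, curr_frame, t_us=1000)
print(events)  # unchanged pixels produce no output at all
```

Only the two changed pixels generate data, which is the source of the bandwidth savings compared with sending the full image.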


Machine Learning

Deep learning is a branch of machine learning based on a set of algorithms that attempt to model high-level abstractions in data.

In our research, we apply deep learning to solve different mobile robot navigation problems, such as depth estimation, end-to-end navigation, and classification.


Vision-based Navigation of Micro Aerial Vehicles (MAVs)

Our research goal is to develop teams of MAVs that can fly autonomously in city-like environments and that can be used to assist humans in tasks like rescue and monitoring.

We focus on enabling autonomous navigation using vision and an inertial measurement unit (IMU) as the sole sensor modalities (i.e., no GPS, no laser).


Active Vision and Exploration

One of the goals of our research is to enable our robots to perceive their environment by changing the viewpoint of their cameras such that they obtain more or better information from it.

We consider this problem of Active Vision to be of great importance to building systems that operate robustly in the real world.

Within this research area, we work on perception-aware path planning to help robots navigate by choosing where to look, as well as on efficiently reconstructing objects and scenes by choosing an optimal next-best-view for the camera.

Specifically, in exploration, the goal is for a robot to fully explore a previously unknown environment.
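A greedy next-best-view loop for exploration can be sketched as follows. This is a toy illustration on a 2D grid, not the planner used in our work: it ignores occlusions, travel cost, and sensor noise, and the names `visible_cells`, `next_best_view`, and `explore`, as well as the square sensor footprint, are assumptions made for this example.

```python
def visible_cells(view, sensor_range):
    """Cells seen from `view`, assuming a square footprint and no occlusion."""
    vx, vy = view
    return {(x, y)
            for x in range(vx - sensor_range, vx + sensor_range + 1)
            for y in range(vy - sensor_range, vy + sensor_range + 1)}

def next_best_view(candidates, unknown, sensor_range=1):
    """Pick the candidate viewpoint that reveals the most unknown cells."""
    return max(candidates,
               key=lambda v: len(visible_cells(v, sensor_range) & unknown))

def explore(candidates, unknown, sensor_range=1):
    """Visit next-best-views until no candidate reveals anything new."""
    unknown = set(unknown)
    path = []
    while unknown:
        view = next_best_view(candidates, unknown, sensor_range)
        gain = visible_cells(view, sensor_range) & unknown
        if not gain:
            break  # remaining cells are unreachable from any candidate
        path.append(view)
        unknown -= gain
    return path, unknown

# A 6x3 map that starts fully unknown, with three candidate viewpoints.
unknown = {(x, y) for x in range(6) for y in range(3)}
candidates = [(1, 1), (4, 1), (2, 1)]

path, remaining = explore(candidates, unknown)
print(path, len(remaining))
```

The information-gain measure here is simply the count of newly observed cells; a perception-aware planner would replace it with a measure tied to the estimation or reconstruction task.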


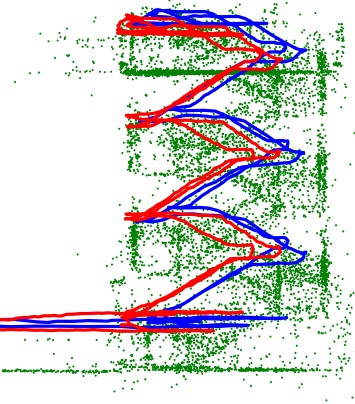

Visual-Inertial Odometry and SLAM

Metric six-degree-of-freedom (6-DoF) state estimation is possible with a single camera and an inertial measurement unit (IMU).

With a monocular camera alone, visual odometry can recover the camera trajectory only up to a scale factor.
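The scale ambiguity can be checked numerically with a pinhole camera model: scaling the scene and the camera positions by the same factor leaves every image measurement unchanged. The sketch below assumes an identity camera rotation and a unit focal length for brevity; the helper names `project` and `scale` and all numeric values are made up for this example.

```python
def project(point, cam_pos, focal=1.0):
    """Pinhole projection of a 3D point seen from a camera at `cam_pos`
    (identity rotation assumed to keep the example short)."""
    x, y, z = (p - c for p, c in zip(point, cam_pos))
    return (focal * x / z, focal * y / z)

def scale(v, s):
    """Multiply every coordinate of a 3D vector by s."""
    return tuple(s * a for a in v)

points = [(0.5, 0.2, 4.0), (-1.0, 0.3, 6.0)]      # scene points
cam_positions = [(0.0, 0.0, 0.0), (0.4, 0.0, 0.1)]  # two camera poses

s = 3.0  # arbitrary scale applied to both the scene and the trajectory
original = [project(p, c) for c in cam_positions for p in points]
scaled = [project(scale(p, s), scale(c, s))
          for c in cam_positions for p in points]

max_diff = max(abs(a - b)
               for uv_o, uv_s in zip(original, scaled)
               for a, b in zip(uv_o, uv_s))
print(max_diff)  # numerically zero: the images cannot reveal s
```

An IMU measures acceleration in metric units, which is what makes the scale observable once inertial data is fused with the images.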

We investigate algorithms for visual odometry (VO) and visual-inertial odometry (VIO), as well as methods to improve the performance of existing VO and VIO pipelines.


Multi-Robot Systems

Teams of robots can succeed in situations where a single robot may fail.

We investigate multi-robot systems composed of homogeneous or heterogeneous robots, using both centralized and distributed communication.

These teams are applied to problems in search and rescue robotics, as well as mapping and navigation.


Monocular Dense Reconstruction

We are working on the problem of estimating dense and accurate 3D maps from a single moving camera.

The monocular setting is an appealing sensing modality for Micro Aerial Vehicles (MAVs), where strict limitations apply on payload and power consumption.

In this case, the high agility turns the platform into a formidable depth sensor, able to deal with a wide depth range and capable of achieving arbitrarily high confidence in the measurement.
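One way to see why confidence can grow with more observations is to fuse repeated depth measurements of the same pixel. The sketch below assumes independent Gaussian noise on each measurement, which is a simplification and not necessarily the model used in our pipelines; the function name `fuse` and all numbers are illustrative.

```python
def fuse(mean_a, var_a, mean_b, var_b):
    """Fuse two independent Gaussian estimates of the same depth
    (product of Gaussians, i.e. inverse-variance weighting)."""
    var = 1.0 / (1.0 / var_a + 1.0 / var_b)
    mean = var * (mean_a / var_a + mean_b / var_b)
    return mean, var

# Four hypothetical depth measurements (metres) of one pixel,
# each with variance 0.04 m^2, taken from different viewpoints.
measurements = [(2.10, 0.04), (1.95, 0.04), (2.05, 0.04), (1.90, 0.04)]

mean, var = measurements[0]
for m, v in measurements[1:]:
    mean, var = fuse(mean, var, m, v)

print(mean, var)  # the variance shrinks with every added measurement
```

Under these assumptions the fused variance falls as 1/n for n equally noisy measurements, so an agile platform that keeps gathering new views can keep tightening the estimate.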


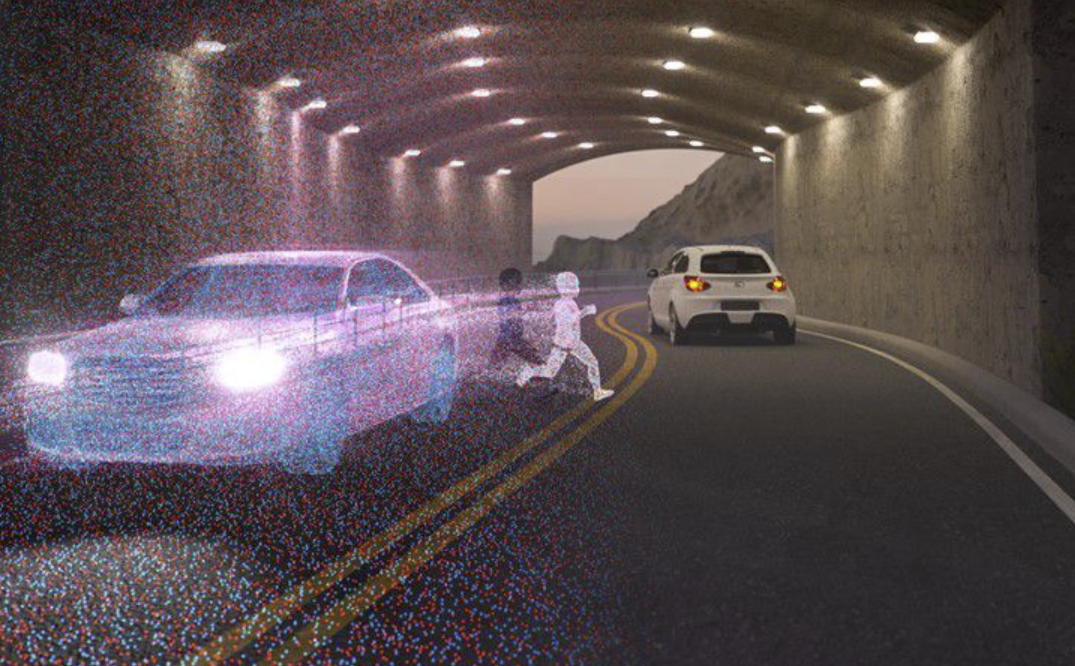

Autonomous Driving

Autonomous driving has emerged as a transformative technology, but its deployment is often limited to ideal conditions. Addressing challenges like low light and high dynamic range environments, our research integrates advanced sensory systems and hybrid perception techniques to push the boundaries of perception and control. By combining event cameras with traditional frame-based sensors, we balance temporal resolution, efficiency, and accuracy, reducing latency and computational load while maintaining high performance. This work enables safer and more reliable autonomous driving in complex, real-world scenarios.


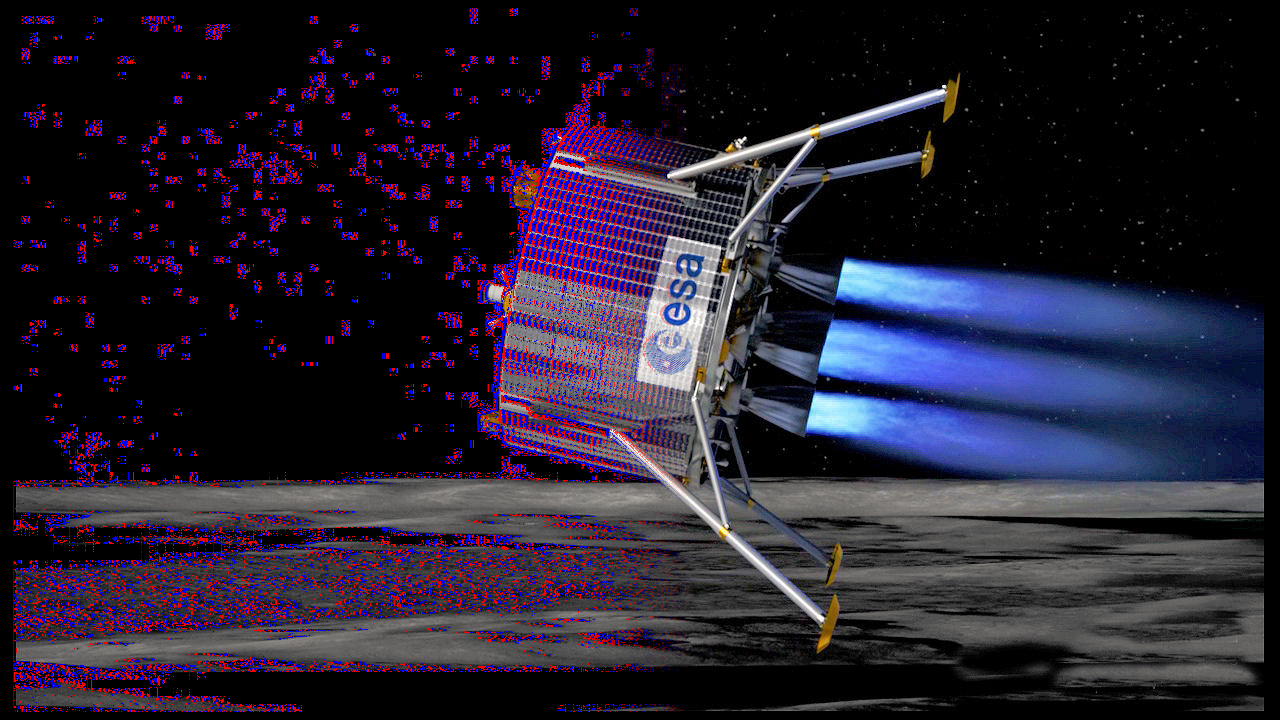

Space Robotics

Space robotics presents unique challenges, from navigating GPS-denied planetary terrains to operating in extreme lighting and topographical conditions. Our research focuses on advancing visual-inertial odometry (VIO) and autonomous navigation systems tailored for extraterrestrial exploration. We explore efficient sensor fusion techniques to enhance robustness and accuracy in visual odometry, leveraging the strengths of asynchronous event-based cameras and traditional imaging sensors. Our work also investigates decentralized state estimation and communication strategies for collaborative multi-robot systems, ensuring scalability and resilience. These innovations enable precise navigation, efficient data usage, and reliable operation in the most challenging extraterrestrial environments.


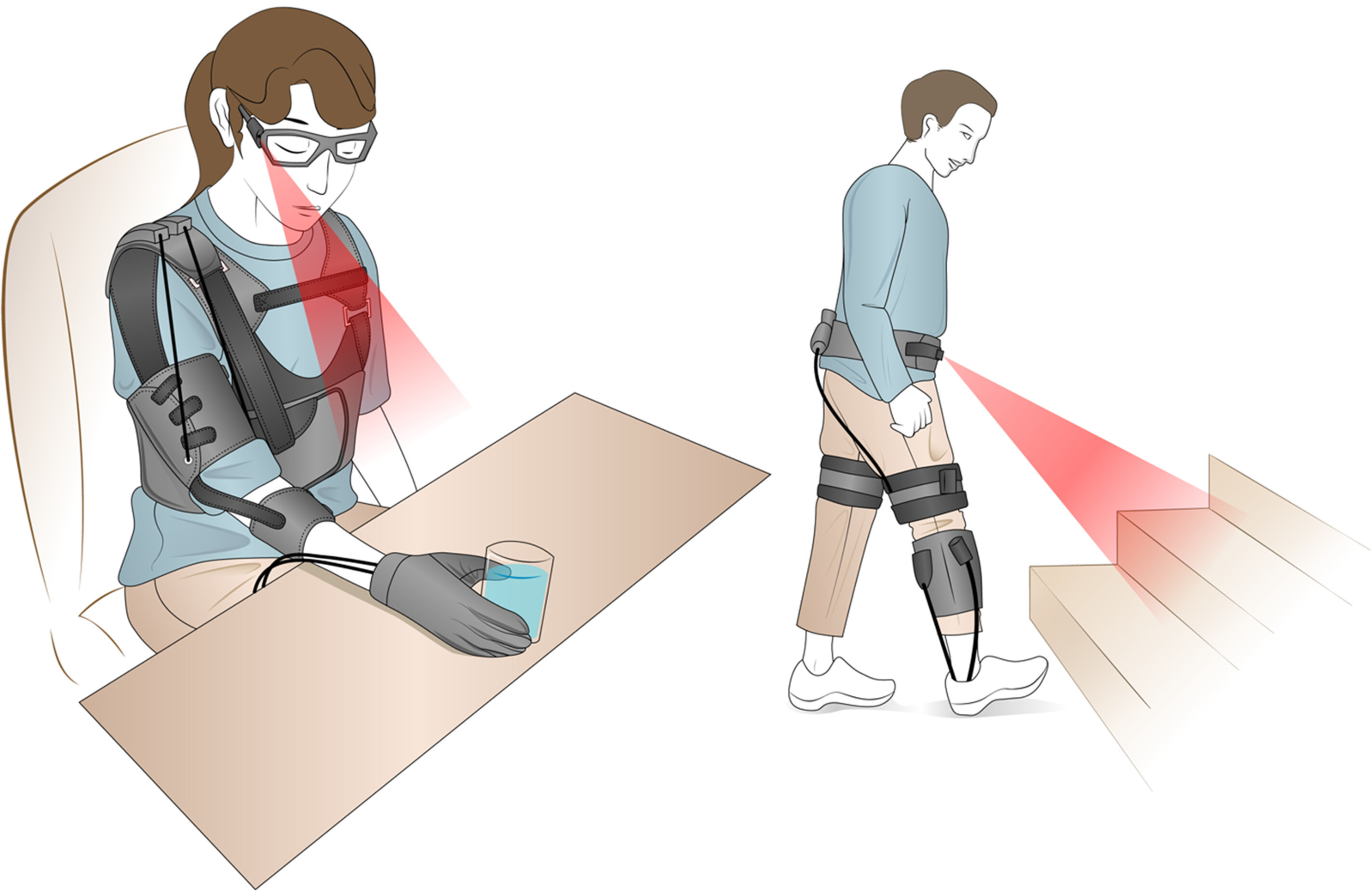

Medical Robotics

Medical robotics integrates advanced sensing, machine learning, and real-time visualization to enhance precision, efficiency, and accessibility in healthcare. Our research focuses on SLAM-based navigation for visually impaired individuals, wearable robotic systems for assistive tasks, and augmented reality for improved accessibility. Our research aims to deliver adaptive, patient-centered robotic solutions for diverse medical needs.
