Schedule:

| 8:15 | Introduction to the workshop: Davide Scaramuzza |

| SESSION 1: Devices and Companies | |

| 8:30 | Tobi Delbruck, ETH Zurich / University of Zurich, lead inventor of the DVS/DAVIS sensors, The development of the DVS and DAVIS sensors, PDF, YouTube |

| 9:00 | Yoel Yaffe, Samsung Israel Research Center, Samsung Electronics, Dynamic Vision Sensor - The Road to Market, PDF, YouTube |

| 9:20 | Brian Taba, IBM Research, Object and Action Recognition on the Event-based IBM TrueNorth Processor, YouTube |

| 9:40 | Xavier Lagorce, Chronocam, The ATIS Sensor, PDF |

| 10:00 | Live Demos and Coffee break |

| SESSION 2: Algorithms | |

| 10:30 | Andrew Davison, Imperial College London, Reconstruction, Motion Estimation and SLAM from Events, PDF, YouTube |

| 11:00 | Kostas Daniilidis, University of Pennsylvania, Event-based Feature Tracking and Visual Inertial Odometry, PDF, YouTube |

| 11:30 | Davide Scaramuzza, University of Zurich, Event-based Algorithms for Robust and High-speed Robotics, PDF, YouTube |

| 12:00 | Live Demos and Lunch Break |

| SESSION 3: Bio-inspired and embedded vision | |

| 13:30 | Chiara Bartolozzi, Istituto Italiano di Tecnologia, Event-driven Sensing for a Humanoid Robot, PDF, YouTube |

| 14:00 | Jörg Conradt, Technical University of Munich, Miniaturized, Embedded Event-based Vision for High-speed Robots, PDF, YouTube |

| 14:30 | Garrick Orchard, National University of Singapore, Bio-Inspired Embedded Event-based Visual Processing, PDF, YouTube |

| 15:00 | Live Demos and Coffee break |

| SESSION 4: Companies | |

| 15:30 | Christian Brandli, CEO and co-founder of Insightness, From Event-based Visions to Real Systems, PDF, YouTube |

| 15:50 | Hanme Kim, co-founder of Slamcore, Event-based SLAM at Slamcore, PDF, YouTube |

| 16:10 | Sven-Erik Jacobsen, founder of iniVation, iniVation – Market-driven Technology Staircase for Event-based Vision, PDF |

| 16:30 | Panel discussion |

Live Demos

- Tobi Delbruck, ETH Zurich / University of Zurich, lead inventor of the DVS/DAVIS sensors, Play with newest DAVIS prototype from INI and inilabs

- Christian Brandli, CEO and founder of Insightness, Insightness Drone Collision Avoidance Evaluation Kit

- Yoel Yaffe, Samsung Israel Research Center, Samsung Electronics, Samsung; Experience Dynamic Vision Sensor (Gen2) on Mobile and PC platforms

- Xavier Lagorce, Chronocam, Chronocam and the ATIS sensor

- Shoushun Chen, Hillhouse Technology, CeleX: Revolutionary Smart Sensor for Smart Mobility

- Jörg Conradt, Technical University of Munich, Small and light embedded event-based vision for mobile systems

- Garrick Orchard and Xie Zhen, National University of Singapore, Event-based optical flow in FPGA

- Alex Zhu, University of Pennsylvania, Event-based feature tracking with probabilistic feature association

- Piotr Dudek (University of Manchester) and Laurie Bose (University of Bristol), Cellular Processor Array cameras

- Henri Rebecq, University of Zurich, EVO: Event-based Visual Odometry

- Timo Horstschaefer, University of Zurich, Real-time Visual-Inertial Odometry with an Event Camera

- Chiara Bartolozzi, Istituto Italiano di Tecnologia, Fast Event-based Corner Detection

- Guillermo Gallego, University of Zurich, Motion estimation by contrast maximization

Objectives:

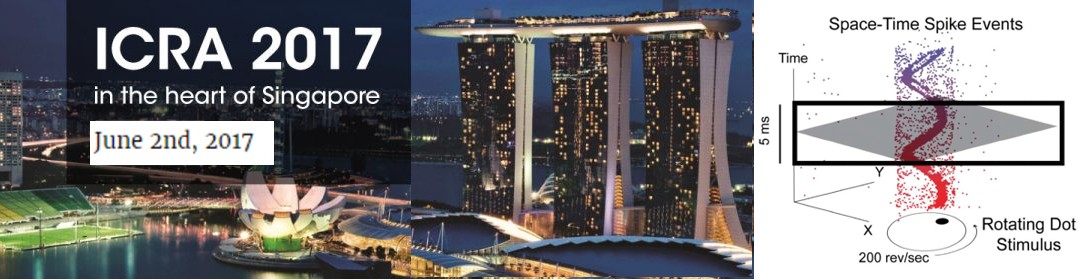

This workshop is dedicated to event-based vision sensors and algorithms. Event-based cameras are revolutionary vision sensors with three key advantages: a measurement rate that is almost 1 million times faster than that of standard cameras, a latency of microseconds, and a dynamic range that is six orders of magnitude larger than that of standard cameras. Event-based sensors open frontiers that are unthinkable with standard cameras (which have been the main sensing technology of the past 50 years). These sensors enable the design of a new class of algorithms to track a baseball in the moonlight, build a flying robot as agile as a fly, and localize and map in challenging lighting conditions and at remarkable speeds. These sensors became commercially available in 2008 and are slowly being adopted in mobile robotics. They made headlines in 2016, when Intel and Bosch announced a $15 million investment in the event-camera company Chronocam and Samsung announced that it was using them with IBM's brain-inspired TrueNorth processor to recognize human gestures. This workshop will cover the sensing hardware as well as the processing, learning, and control methods needed to take advantage of these sensors.

FAQs:

- What is an event camera? Watch this video explanation! More info here.

- What are possible applications of event cameras? A non-comprehensive list can be found here and here.

- Where can I buy an event camera? From IniLabs.

- Is there an event-camera dataset I can play with, so that I don't have to buy the sensor? Yes, here.

- Where can I find more information? Check out this List of Event-based Vision Resources.

Organizers:

- Davide Scaramuzza - University of Zurich

- Guillermo Gallego - University of Zurich

- Andrea Censi - ETH Zurich / nuTonomy

Previous related workshops:

- ICRA 2015 Workshop on Innovative Sensing for Robotics, with focus on Neuromorphic Sensors.

- Event-Based Vision for High-Speed Robotics (slides), IROS 2015 Workshop on Alternative Sensing for Robot Perception.

- The Telluride Neuromorphic Cognition Engineering Workshops.

- Capo Caccia Workshops toward Cognitive Neuromorphic Engineering.

This workshop was partially sponsored by nuTonomy and the National Competence Research Center in Robotics, through the Swiss Science Foundation.