Elias Mueggler

MSc ETH Zurich, Robotics and Perception Group, Department of Informatics, University of Zurich

Email: mueggler (at) ifi (dot) uzh (dot) ch

Office: Andreasstrasse 15, AND 2.16

I am a PhD student at the Robotics and Perception Group led by Prof. Davide Scaramuzza.

Currently, I am working on event-based vision for high-speed robotics and air-ground robot collaboration.

I received my Bachelor's and Master's degrees in Mechanical Engineering from ETH Zurich in 2010 and 2012, respectively.

During my studies at ETH, I focused on robotics, dynamics, and computer vision.

I wrote my Master's thesis on visual SLAM for space applications at MIT under the supervision of Prof. John J. Leonard.

Link to my Google Scholar profile

Awards

- Qualcomm Innovation Fellowship 2016 (40'000 USD)

- Supervisor of Timo Horstschaefer, Winner of 2016 Fritz Kutter Award of ETH Zurich

- KUKA Innovation Award 2014 (20'000 EUR) [ Presentation at AUTOMATICA ]

- Convergent Science Network of Biomimetics and Neurotechnology CapoCaccia Fellowship 2014

- Supervisor of Basil Huber, Winner of 2014 Fritz Kutter Award of ETH Zurich

- Supervisor of Benjamin Keiser, Winner of the 2013 KUKA Best Student Project

Research Interests

Event-based Robot Vision

Please find more details about our research on event-based vision here.

References

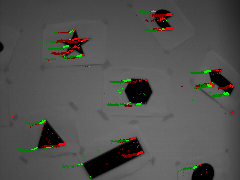

Fast Event-based Corner Detection

British Machine Vision Conference (BMVC), London, 2017.

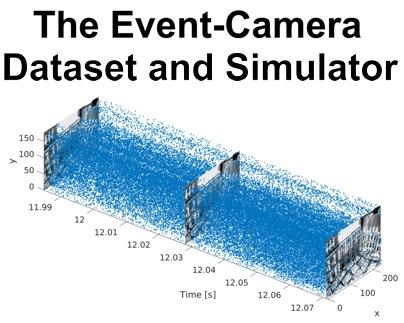

The Event-Camera Dataset and Simulator: Event-based Data for Pose Estimation, Visual Odometry, and SLAM

International Journal of Robotics Research, Vol. 36, Issue 2, pages 142-149, Feb. 2017.

Low-Latency Visual Odometry using Event-based Feature Tracks

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Daejeon, 2016.

Feature Detection and Tracking with the Dynamic and Active-pixel Vision Sensor (DAVIS)

International Conference on Event-Based Control, Communication and Signal Processing (EBCCSP), Krakow, 2016.

Towards Evasive Maneuvers with Quadrotors using Dynamic Vision Sensors

European Conference on Mobile Robots (ECMR), Lincoln, 2015.

Human vs. computer slot car racing using an event and frame-based DAVIS vision sensor

IEEE International Symposium on Circuits and Systems (ISCAS), Lisbon, 2015.

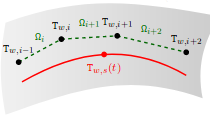

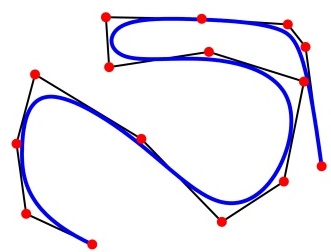

Continuous-Time Trajectory Estimation for Event-based Vision Sensors

Robotics: Science and Systems (RSS), Rome, 2015.

Lifetime Estimation of Events from Dynamic Vision Sensors

IEEE International Conference on Robotics and Automation (ICRA), Seattle, 2015.

Air-Ground Collaboration

We develop strategies for aerial and ground robots to work together as a team. By doing so, the robots can profit from each other's capabilities. Our demonstration won the KUKA Innovation Award 2014 and was presented in a paper at the IEEE International Symposium on Safety, Security, and Rescue Robotics (SSRR) in 2014.

References

Aerial-guided Navigation of a Ground Robot among Movable Obstacles

IEEE International Symposium on Safety, Security, and Rescue Robotics (SSRR), Toyako-cho, 2014.

Supervised Students

- Timo Horstschaefer: Parallel Tracking, Depth Estimation, and Image Reconstruction with an Event Camera (Master Thesis 2016, Winner of the Fritz Kutter Award 2016)

- Jonathan Huber: Ground Robot Localization in Aerial 3D Maps (Semester Thesis 2016)

- Julia Nitsch: Terrain Classification in Search-and-Rescue Scenarios (NCCR Internship 2016)

- Mathis Kappeler: Exposure Control for Robust Visual Odometry (Master Project 2016)

- Imanol Studer: Head Pose Tracking with Quadrotors (Master Project 2015)

- Jon Lund: Towards SLAM for Dynamic Vision Sensors (Master Thesis 2015)

- Micha Brunner: Flying Motion Capture System (Semester Thesis 2015)

- Igor Bozic: High-Frequency Position Control of the KUKA youBot Arm (Master Project 2015)

- Joachim Ott: Vision-Based Surface Classification for Micro Aerial Vehicles (Semester Thesis 2015)

- David Tedaldi: Feature Tracking based on Frames and Events (Semester Thesis 2015) [ PDF ]

- Nathan Baumli: Towards Evasive Maneuvers for Quadrotors using Stereo Dynamic Vision (Master Thesis 2015) [ PDF ]

- Amos Zweig: Event-based Depth Estimation (Semester Thesis 2014)

- Nathan Baumli: Event-Based Full-Frame Visualization (Semester Thesis 2014) [ PDF ]

Previous Projects

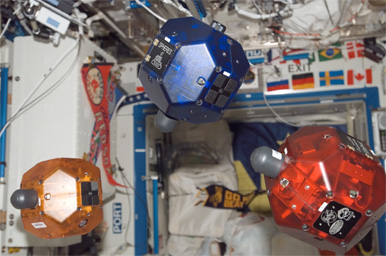

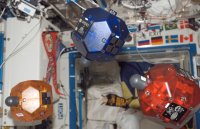

Visual Mapping of Unknown Space Targets for Relative Navigation and Inspection (Master Thesis)

The SPHERES VERTIGO goggles: vision based mapping and localization onboard the International Space Station

International Symposium on Artificial Intelligence, Robotics and Automation in Space (i-SAIRAS), Turin, 2012.