Event-aided Direct Sparse Odometry

Description

We introduce EDS, a direct monocular visual odometry method using events and frames. Our algorithm leverages the event generation model to track the camera motion in the blind time between frames. The method formulates a direct probabilistic approach to observed brightness increments. Per-pixel brightness increments are predicted using a sparse set of selected 3D points and are compared to the events via the brightness increment error to estimate camera motion. The method recovers a semi-dense 3D map using photometric bundle adjustment. EDS is the first method to perform 6-DOF VO using events and frames with a direct approach. By design, it overcomes the problem of changing appearance in indirect methods. We also show that, for a target error performance, EDS can work at lower frame rates than state-of-the-art frame-based VO solutions. This opens the door to low-power motion-tracking applications where frames are sparingly triggered "on demand" and our method tracks the motion in between. We release code and datasets to the public.

Citing

If you use this work in your research, please cite the following paper:

Event-aided Direct Sparse Odometry

IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2022.

Oral Presentation.
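The Description says that per-pixel brightness increments are predicted from a sparse set of selected points and compared with the events via the brightness increment error. The Python sketch below illustrates that comparison under stated assumptions; it is not the released EDS code, and the names `integrate_events`, `predict_increments` and `brightness_increment_error` are hypothetical. Events are summed per pixel as polarity times an assumed contrast threshold C. The predicted increment uses the linearized model ΔL ≈ -∇L · v Δt, with the optical flow v passed in directly (in EDS it would follow from the candidate camera motion and point depths). Both increment vectors are normalized to unit length so that the unknown C cancels; this error form is an assumption borrowed from related event-based tracking work.

```python
import math
from typing import Dict, List, Tuple

Pixel = Tuple[int, int]
Vec2 = Tuple[float, float]


def integrate_events(events: List[Tuple[int, int, int]],
                     contrast_threshold: float = 0.2) -> Dict[Pixel, float]:
    """Observed increment per pixel: C times the sum of event polarities (+1/-1)."""
    increments: Dict[Pixel, float] = {}
    for x, y, polarity in events:
        increments[(x, y)] = increments.get((x, y), 0.0) + contrast_threshold * polarity
    return increments


def predict_increments(grad: Dict[Pixel, Vec2], flow: Dict[Pixel, Vec2],
                       dt: float) -> Dict[Pixel, float]:
    """Linearized prediction -grad(L) . v * dt at each selected pixel (hypothetical helper)."""
    predicted: Dict[Pixel, float] = {}
    for u, (gx, gy) in grad.items():
        vx, vy = flow[u]
        predicted[u] = -(gx * vx + gy * vy) * dt
    return predicted


def _normalize(values: List[float]) -> List[float]:
    """Scale to unit L2 norm; a zero vector is returned unchanged."""
    norm = math.sqrt(sum(v * v for v in values))
    return [v / norm for v in values] if norm > 0 else list(values)


def brightness_increment_error(observed: Dict[Pixel, float],
                               predicted: Dict[Pixel, float]) -> float:
    """Squared distance between unit-normalized observed and predicted increments,
    evaluated only on the pixels that have a prediction (the selected points)."""
    pixels = sorted(predicted)
    obs = _normalize([observed.get(u, 0.0) for u in pixels])
    pred = _normalize([predicted[u] for u in pixels])
    return sum((o - p) ** 2 for o, p in zip(obs, pred))


if __name__ == "__main__":
    events = [(0, 0, -1), (0, 0, -1), (1, 0, 1)]
    grad = {(0, 0): (1.0, 0.0), (1, 0): (0.0, 1.0)}
    flow = {(0, 0): (2.0, 0.0), (1, 0): (0.0, -1.0)}
    observed = integrate_events(events)
    predicted = predict_increments(grad, flow, dt=0.1)
    print(brightness_increment_error(observed, predicted))
```

In this toy input the observed increments are exactly twice the predicted ones, so the normalized error is zero up to rounding. This illustrates why normalizing removes the dependence on C: a tracker would instead vary the motion (and therefore the flow) to drive this error down.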

Code:

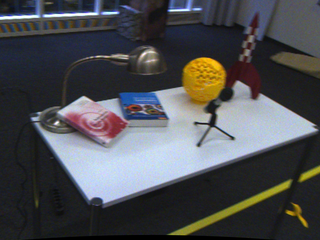

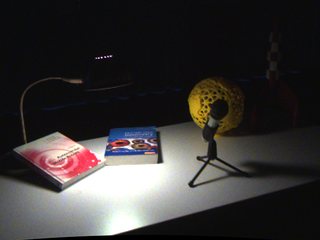

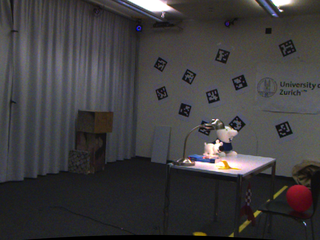

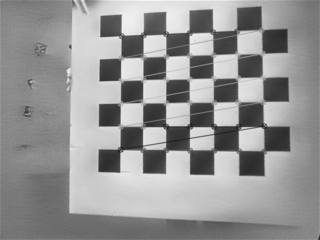

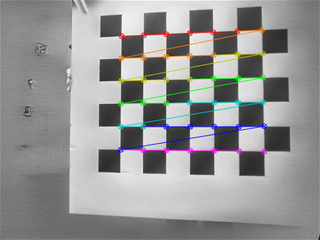

RPG Beamsplitter Design

Dataset: High-Quality Events & RGB Beamsplitter Sequences for Visual-Inertial Odometry

00 peanuts dark

01 peanuts light

02 rocket earth light

03 rocket earth dark

04 floor loop

05 rpg building

06 ziggy and fuzz

07 ziggy and fuzz hdr

08 peanuts running

09 ziggy flying pieces

10 office

11 all characters

12 floor eight loop

13 airplane

14 ziggy in the arena

15 apartment day

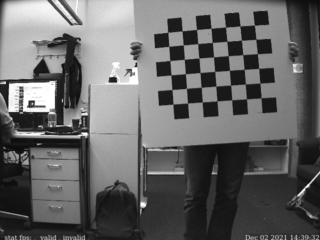

Calibration:

00 calib

01 calib

02 hand eye vrpn

03 hand eye vicon