Semi-Dense 3D Reconstruction with a Stereo Event Camera

Description

Event cameras are bio-inspired sensors that offer several advantages, such as low latency, high speed and high dynamic range, to tackle challenging scenarios in computer vision. This paper presents a solution to the problem of 3D reconstruction from data captured by a stereo event-camera rig moving in a static scene, such as in the context of stereo Simultaneous Localization and Mapping. The proposed method consists of the optimization of an energy function designed to exploit small-baseline spatio-temporal consistency of events triggered across both stereo image planes. To improve the density of the reconstruction and to reduce the uncertainty of the estimation, a probabilistic depth-fusion strategy is also developed. The resulting method has no special requirements on either the motion of the stereo event-camera rig or prior knowledge about the scene. Experiments demonstrate that our method can deal with both texture-rich and sparse scenes, outperforming state-of-the-art stereo methods based on event data image representations.

Poster

Here is the poster used for presentation at ECCV 2018.

Citing

Please cite the following paper if you use these datasets for your research:

Semi-Dense 3D Reconstruction with a Stereo Event Camera

European Conference on Computer Vision (ECCV), Munich, 2018.

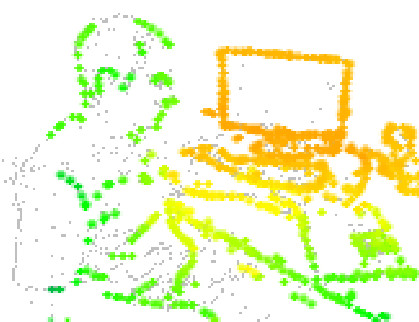

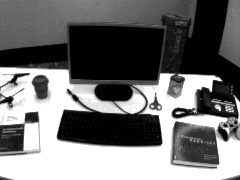

Stereo DAVIS dataset

The stereo event camera sequences used in our ECCV 2018 paper are available in the table below. The real-data sequences were recorded with two DAVIS240C sensors in stereo configuration, in a room with a motion capture system. The simulated sequence was generated using an event-based camera simulator.

We provide all event sequences as binary rosbag files for use with the Robot Operating System (ROS). The format is the one used by the RPG DVS ROS driver. In the rosbag files, events are stored as dvs_msgs/EventArray messages and ground-truth poses as geometry_msgs/PoseStamped messages.
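As a rough illustration of how these messages might be consumed, the sketch below reads one event topic from a rosbag and accumulates the events into a signed per-pixel count image. It assumes a ROS 1 Python environment with the `rosbag` package for the reading step. The topic name `/davis/left/events`, the file name, and the helper names `read_events` and `accumulate` are hypothetical and not taken from this page, so check the actual topics with `rosbag info` first. The default image size of 240 x 180 pixels is the DAVIS240C resolution.

```python
from collections import namedtuple

# Lightweight stand-in mirroring the per-event fields of dvs_msgs/Event.
Event = namedtuple("Event", ["x", "y", "t", "polarity"])


def read_events(bag_path, topic):
    """Yield Event tuples from one dvs_msgs/EventArray topic of a rosbag.

    Needs the ROS 1 `rosbag` Python package; the import is local so the
    rest of this sketch can be used without ROS installed.
    """
    import rosbag

    with rosbag.Bag(bag_path, "r") as bag:
        for _, msg, _ in bag.read_messages(topics=[topic]):
            for e in msg.events:
                # e.ts is a ROS time stamp; convert it to float seconds.
                yield Event(e.x, e.y, e.ts.to_sec(), bool(e.polarity))


def accumulate(events, width=240, height=180):
    """Sum event polarities per pixel: +1 for ON events, -1 for OFF events."""
    img = [[0] * width for _ in range(height)]
    for e in events:
        img[e.y][e.x] += 1 if e.polarity else -1
    return img


if __name__ == "__main__":
    # Hypothetical file and topic names, for illustration only.
    events = read_events("sequence.bag", "/davis/left/events")
    image = accumulate(events)
    print(sum(abs(v) for row in image for v in row))
```

Accumulating over a whole sequence is only useful as a sanity check; in practice one would slice the event stream into short time windows before building such images.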

Sequences

| Scene | Sequence name | Download |
|---|---|---|
| | bin | rosbag (432 MB) |
| | boxes | rosbag 1 (509 MB), rosbag 2 (950 MB) |
| | desk | rosbag 1 (525 MB), rosbag 2 (601 MB) |
| | monitor | rosbag 1 (105 MB), rosbag 2 (484 MB) |
| | reader | rosbag (706 MB) |
| | simulation_3planes | rosbag (65 MB). Calibration is in the camera_info topic |

Calibration: Intrinsic and Extrinsic parameters

This zip file (2 MB) contains the calibration of the stereo DAVIS camera. Calibration was performed using Kalibr on the grayscale frames acquired by the DAVIS camera. The same calibration was then used to undistort the events, since both sensors (event-based and frame-based) share the same photoreceptor pixel array in the DAVIS camera.
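Because events carry raw pixel coordinates, undistorting them amounts to mapping each (x, y) through the inverse of the lens distortion model. The sketch below shows one way this could be done, assuming the radial-tangential ("radtan") model, which is one of several models Kalibr supports; this page does not state which model the provided calibration uses. The function names and all intrinsic and distortion values are placeholders for illustration, not values from the zip file.

```python
def distort(x, y, k1, k2, p1, p2):
    """Apply radial-tangential distortion to normalized image coordinates."""
    r2 = x * x + y * y
    radial = 1.0 + k1 * r2 + k2 * r2 * r2
    xd = x * radial + 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
    yd = y * radial + p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
    return xd, yd


def undistort_pixel(u, v, intrinsics, dist, iters=20):
    """Map a raw (distorted) pixel to its undistorted pixel location.

    intrinsics = (fx, fy, cx, cy), dist = (k1, k2, p1, p2).
    The distortion model has no closed-form inverse, so it is inverted
    here by fixed-point iteration on the normalized coordinates.
    """
    fx, fy, cx, cy = intrinsics
    k1, k2, p1, p2 = dist
    xd, yd = (u - cx) / fx, (v - cy) / fy
    x, y = xd, yd  # initial guess: no distortion
    for _ in range(iters):
        r2 = x * x + y * y
        radial = 1.0 + k1 * r2 + k2 * r2 * r2
        dx = 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
        dy = p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
        x, y = (xd - dx) / radial, (yd - dy) / radial
    return fx * x + cx, fy * y + cy


if __name__ == "__main__":
    # Placeholder calibration, NOT the values shipped in the zip file.
    K = (200.0, 200.0, 120.0, 90.0)
    D = (-0.3, 0.1, 0.001, -0.001)
    print(undistort_pixel(200.0, 150.0, K, D))
```

Since the sensor has a fixed, small resolution, the mapping can be precomputed once per camera as a lookup table indexed by raw pixel, so that each incoming event costs only a table read.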

We also provide a calibration rosbag (81 MB) containing DAVIS frames, in case you want to calibrate the stereo rig yourself.