The Zurich Urban Micro Aerial Vehicle Dataset

for Appearance-based Localization, Visual Odometry, and SLAM

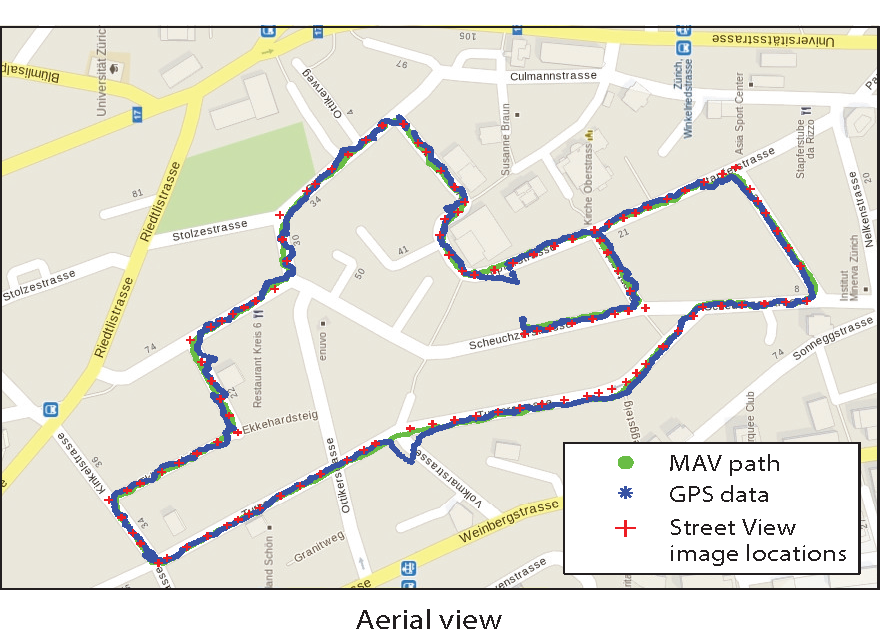

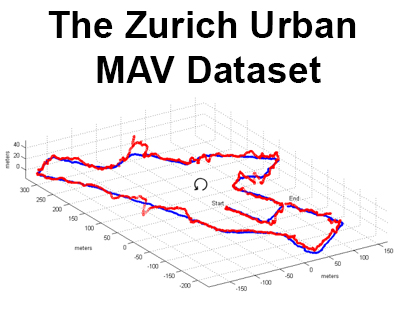

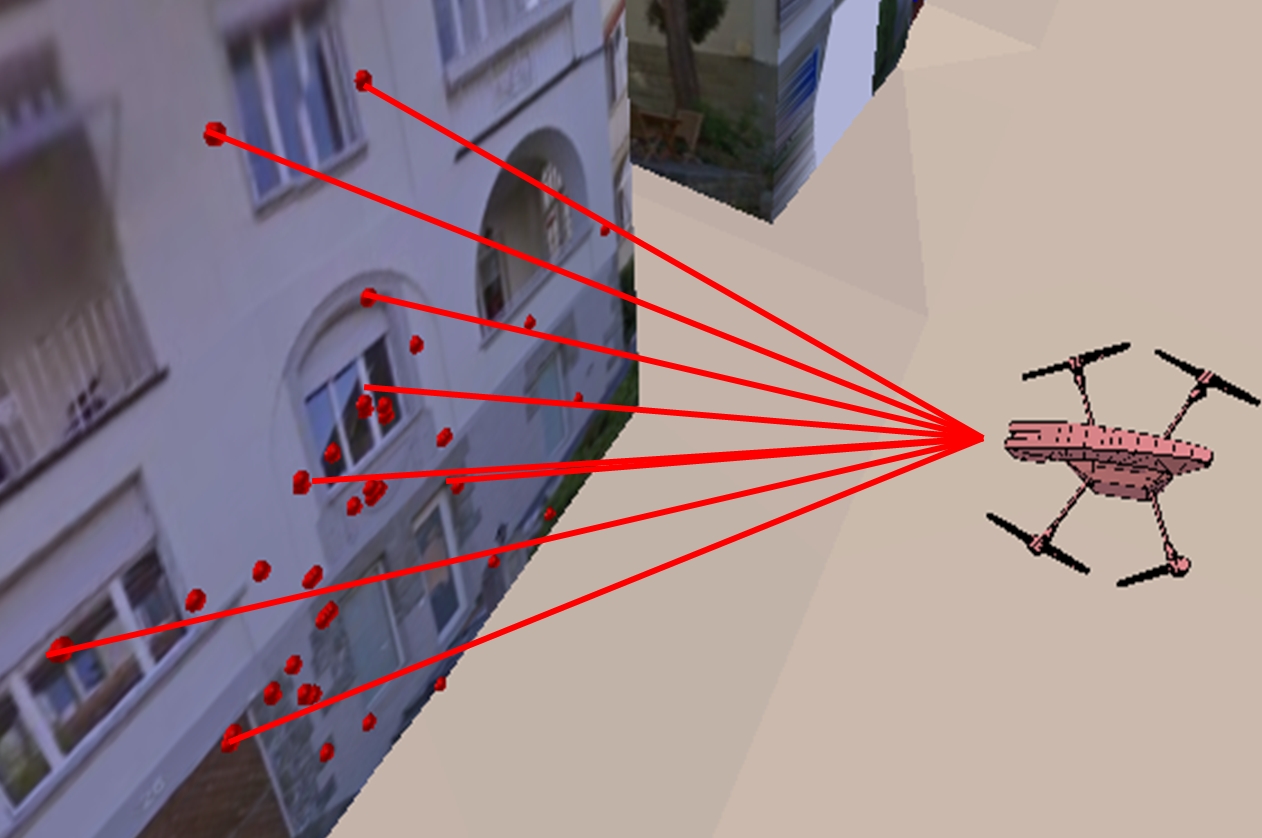

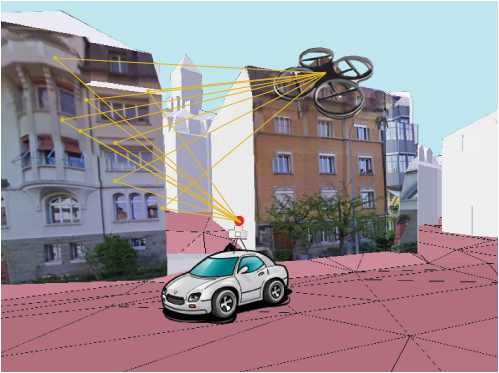

We present the world's first dataset recorded on board a camera-equipped Micro Aerial Vehicle (MAV) flying within urban streets at low altitudes (i.e., 5-15 meters above the ground).

The 2 km dataset consists of time-synchronized high-resolution aerial images, GPS and IMU sensor data, ground-level street view images, and ground truth data. The dataset is ideal for evaluating and benchmarking appearance-based topological localization, monocular visual odometry, simultaneous localization and mapping (SLAM), and online 3D reconstruction algorithms for MAVs in urban environments.

Links:

More on vision based navigation at our lab

Appearance-based GPS-denied Urban Localization of MAVs

Citing

When using the data in an academic context, please cite the following paper.

The Zurich Urban Micro Aerial Vehicle Dataset

International Journal of Robotics Research, April 2017

Visualization of the Dataset

Download

The entire dataset is roughly 28 gigabytes. We also provide a sample subset of less than 200 megabytes, representing the first part of the dataset. The dataset is available at:

Sample dataset 200MB (zip)

Entire dataset 28GB (zip)

Dataset Format

The dataset contains time-synchronized high-resolution images (1920 x 1080 x 24 bits), GPS, IMU, and ground-level Google Street View images. The high-resolution aerial images were captured with a rolling-shutter GoPro Hero 4 camera that records each image frame line by line, from top to bottom, with a readout time of 30 milliseconds. A summary of the enclosed files is given below.

| Folder/File name | Description |
| --- | --- |
| ./Log Files/ | Folder containing on-board log files and ground truth data. |
| -- BarometricPressure.csv | Log data of the on-board barometric pressure sensor. |
| -- OnbordGPS.csv | Log data of the on-board GPS receiver. |
| -- OnboardPose.csv | Log of the on-board Pixhawk PX4 autopilot pose estimation. |
| -- RawAccel.csv | Log data of the on-board accelerometer. |
| -- RawGyro.csv | Log data of the on-board gyroscope. |
| -- GroundTruthAGL.csv | Ground truth MAV camera positions. |
| -- GroundTruthAGM.csv | Ground truth matches of image IDs between the aerial and ground-level images. |
| -- StreetViewGPS.csv | GPS tags of the ground-level Street View images. |
| ./MAV Images/ | Folder with 81'169 images recorded by the MAV in the city of Zurich, Switzerland. |
| ./MAV Images Calib/ | 30 images with a calibration pattern to compute the intrinsic MAV camera parameters. |
| ./Street View Img/ | Folder with 113 Google Street View images covering the area of the data collection. |
| ./calibration_data.npz | Internal camera parameters computed using the images from ./MAV Images Calib/ |
| ./loadGroundTruthAGL.m | This script is used by plotPath.m to load the data into Matlab. |
| ./plotPath.m | Script to visualize the GPS and ground truth path in Matlab. |
| ./write_ros_bag.py | Script to write the data into a ROS bag file. |
| ./readme.txt | More detailed descriptions of the files listed above. |

Text Files

The data from the on-board barometric pressure sensor (BarometricPressure.csv), accelerometer (RawAccel.csv), gyroscope (RawGyro.csv), GPS receiver (OnbordGPS.csv), and pose estimation (OnboardPose.csv) is logged and time-synchronized using the clock of the PX4 autopilot board. The on-board sensor data was spatially and temporally aligned with the aerial images. The first column of every file contains the timestamp, expressed in microseconds, at which the data was recorded. The following columns store the sensor readings. The second column in OnbordGPS.csv encodes the identification number (ID) of every aerial image stored in the /MAV Images/ folder. The first column in GroundTruthAGL.csv is the ID of the aerial image, followed by the ground truth camera position of the MAV and the raw GPS data. The second column in GroundTruthAGM.csv is the ID of the aerial image, followed by the IDs of the first, second, and third best matching ground-level street view images in the /Street View Img/ folder.
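As an illustration of the layout described above, the following minimal Python sketch reads a log file whose first column is a timestamp in microseconds and converts it to seconds. The function name `read_log`, the comma delimiter, and the handling of a possible header line are assumptions made for this example; readme.txt in the dataset remains the authoritative description of each file.

```python
import csv
import io


def read_log(stream):
    """Yield (timestamp_seconds, readings) pairs from a CSV log.

    Assumptions (not confirmed by the dataset documentation): values are
    comma-separated, and any row whose first field is not numeric, such
    as a possible header line, can be skipped.
    """
    for fields in csv.reader(stream):
        if not fields:
            continue
        try:
            t_us = float(fields[0])
        except ValueError:
            continue  # non-numeric first field: treat as header and skip
        # Timestamps are logged in microseconds; convert to seconds.
        yield t_us / 1e6, [f.strip() for f in fields[1:]]


# Made-up sample rows for demonstration only, not taken from the dataset.
sample = io.StringIO(
    "Timestamp,x,y,z\n"
    "1000000,0.1,0.2,9.8\n"
    "1500000,0.0,0.3,9.7\n"
)
rows = list(read_log(sample))
```

To read a real file, an open file handle can be passed in place of the in-memory sample, for example `open("Log Files/RawAccel.csv", newline="")`. The readings are left as strings because the column types differ between files.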

Parsing and indexing

Besides the raw data measured during the flight, we provide a script to visualize the ground truth path of the Fotokite in comparison with the recorded GPS location data in MATLAB. We also provide a script to parse and write all the data into a rosbag file so that it can be easily viewed and replayed using the Robot Operating System (ROS) ecosystem. The logged numerical data is saved in human-readable tables, and the high-quality images are stored in JPEG format.

Ground truth

Two types of ground truth data are provided in order to evaluate and benchmark different vision-based localization algorithms. Firstly, appearance-based topological localization algorithms, which match aerial images to street-level ones, can be evaluated in terms of precision and recall. Secondly, metric localization algorithms, which compute the ego-motion of the MAV using monocular visual SLAM tools, can be evaluated in terms of standard deviations from the ground truth path of the vehicle.
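For the topological case, one possible way to compute precision and recall is sketched below. It assumes that an algorithm returns at most one Street View image ID per aerial image (or no match at all), and that a prediction counts as correct if it is among the ground truth matches listed for that aerial image. These definitions, the function name `precision_recall`, and the sample IDs are assumptions for illustration, not the evaluation protocol of the cited paper.

```python
def precision_recall(predictions, ground_truth):
    """Compute precision and recall of air-ground matches.

    predictions:  dict mapping aerial image ID -> predicted Street View ID
                  (aerial images without a prediction are simply absent).
    ground_truth: dict mapping aerial image ID -> set of accepted
                  Street View IDs (e.g. the three best matches).
    """
    made = 0      # predictions made for images that have ground truth
    correct = 0   # predictions that hit one of the accepted matches
    for image_id, accepted in ground_truth.items():
        predicted = predictions.get(image_id)
        if predicted is None:
            continue
        made += 1
        if predicted in accepted:
            correct += 1
    precision = correct / made if made else 0.0
    recall = correct / len(ground_truth) if ground_truth else 0.0
    return precision, recall


# Hypothetical IDs for demonstration only.
gt = {1: {10, 11, 12}, 2: {11, 12, 13}, 3: {20, 21, 22}, 4: {30, 31, 32}}
pred = {1: 10, 2: 40, 3: 21}  # no prediction for aerial image 4
precision, recall = precision_recall(pred, gt)
```

In this example three predictions are made and two are correct, so precision is 2/3, while recall is 2/4 because four aerial images have ground truth matches.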

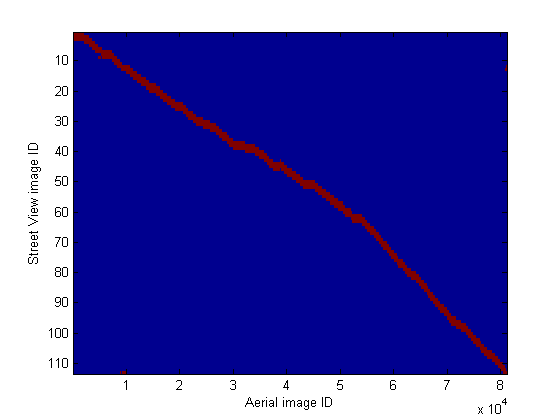

Evaluation of topological localization algorithms

In order to establish the ground truth confusion map, which shows the correct matches between the MAV images and the Street View images, we computed the three closest geometric distances between the aerial and ground-level images using the enclosed GPS tags. The ground truth matches were then verified visually. Thus, the performance of different air-ground image-matching based localization algorithms can be evaluated and compared in terms of precision and recall.
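The distance-based candidate selection can be sketched as follows. The sketch uses the haversine great-circle distance between GPS tags, which is an assumption: the text only states that geometric distances were used. The function names and the coordinates are hypothetical, and the visual verification step is not reproduced.

```python
import math

EARTH_RADIUS_M = 6371000.0  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def three_nearest(aerial_tag, street_tags):
    """Return the IDs of the three Street View images closest to an aerial image.

    aerial_tag:  (lat, lon) of the aerial image.
    street_tags: dict mapping Street View image ID -> (lat, lon).
    """
    lat, lon = aerial_tag
    ranked = sorted(
        street_tags,
        key=lambda sid: haversine_m(lat, lon, *street_tags[sid]),
    )
    return ranked[:3]


# Made-up coordinates for demonstration only.
street = {
    1: (47.3769, 8.5418),
    2: (47.3770, 8.5430),
    3: (47.3800, 8.5500),
    4: (47.3769, 8.5417),
}
nearest = three_nearest((47.3769, 8.5417), street)
```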

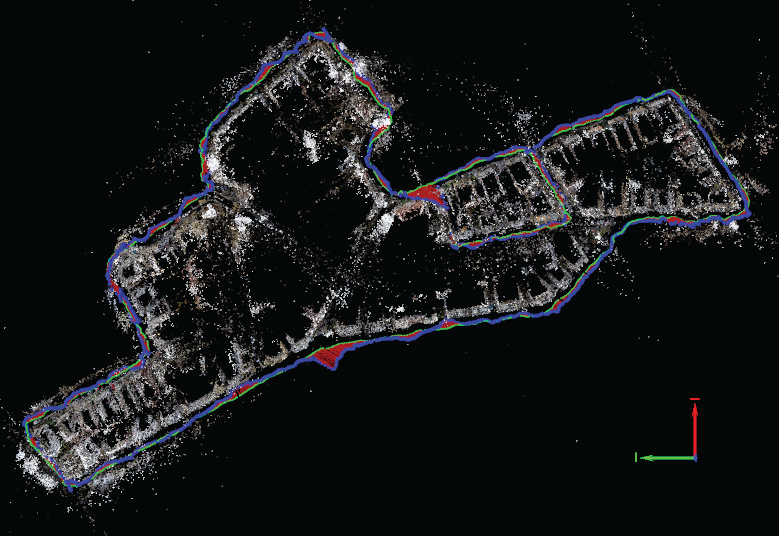

Evaluation of metric localization algorithms

In order to compute the actual metric path of the MAV, we performed an accurate photogrammetric 3D reconstruction using the Pix4D software. To obtain the best result and to reduce the accumulated error in consecutive measurements, we recorded the data in such a way as to include loop-closure situations after long flight paths. To perform the reconstruction, we sub-sampled the data at 1 fps. The GPS position was used as the initial position of the images. Next, a total of 5'237'298 2D keypoint observations and 1'382'274 3D points were used for the block bundle adjustment in order to iteratively refine the camera positions.
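Given such a ground truth path, an estimated trajectory can be compared against it position by position. The sketch below computes per-image Euclidean errors and their root-mean-square value. It assumes that the estimate is already expressed in the same coordinate frame and scale as the ground truth (a monocular estimate would first need to be aligned, which is not shown) and that both are indexed by aerial image ID. The function names and sample positions are hypothetical.

```python
import math


def position_errors(estimate, ground_truth):
    """Euclidean error for every image ID present in both trajectories.

    estimate, ground_truth: dicts mapping image ID -> (x, y, z) position.
    """
    common = sorted(set(estimate) & set(ground_truth))
    return [math.dist(estimate[i], ground_truth[i]) for i in common]


def rmse(errors):
    """Root-mean-square of a non-empty list of errors."""
    return math.sqrt(sum(e * e for e in errors) / len(errors))


# Made-up positions for demonstration only.
gt_path = {1: (0.0, 0.0, 0.0), 2: (1.0, 0.0, 0.0), 3: (2.0, 0.0, 0.0)}
est_path = {1: (0.0, 0.0, 0.0), 2: (1.0, 3.0, 4.0), 3: (2.0, 0.0, 0.0)}
errors = position_errors(est_path, gt_path)
error_rmse = rmse(errors)
```

Because the ground truth was computed from data sub-sampled at 1 fps, only the image IDs present in both trajectories are compared.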

References

The work listed below inspired the recording of this dataset. In these papers, a much smaller dataset was used, which did not contain time-synchronized GPS (except for a small street segment), IMU data, or accurate metric ground truth. If you use this dataset, please send your paper to majdik (at) ifi (dot) uzh (dot) ch.

Air-ground Matching: Appearance-based GPS-denied Urban Localization of Micro Aerial Vehicles

Journal of Field Robotics, 2015.

Micro Air Vehicle Localization and Position Tracking from Textured 3D Cadastral Models

IEEE International Conference on Robotics and Automation (ICRA), Hong Kong, 2014.

MAV Urban Localization from Google Street View Data

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Tokyo, 2013.

License

This dataset is released with no restriction. It can be used for research, evaluation, and commercial purposes.

Acknowledgements

This dataset was recorded with the help of Karl Schwabe, Mathieu Noirot-Cosson, and Yves Albers-Schoenberg. To record the dataset we used a Fotokite MAV put at our disposal by Perspective Robotics AG (http://fotokite.com). This work was supported by the National Centre of Competence in Research Robotics (NCCR) through the Swiss National Science Foundation and by the Hungarian Scientific Research Fund (No. OTKA/NKFIH 120499).