Active Vision and Exploration

Active vision is concerned with obtaining more information from the environment by actively choosing where and how to observe it using a camera.

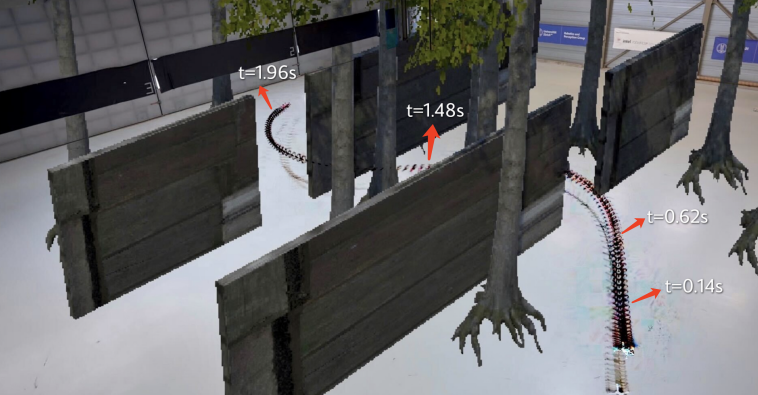

PA-MPPI: Perception-Aware Model Predictive Path Integral Control for Quadrotor Navigation in Unknown Environments

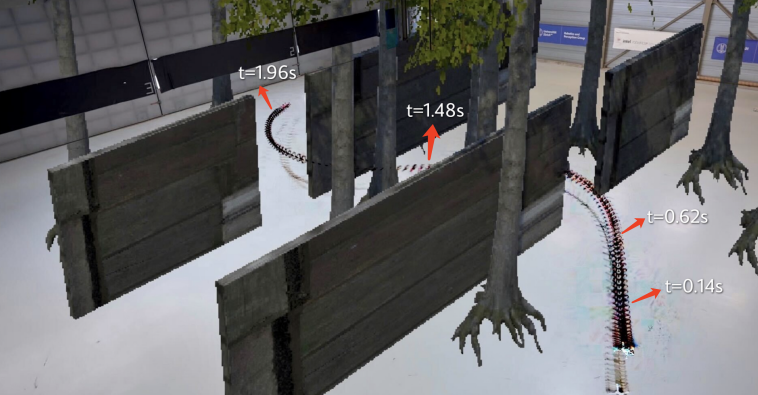

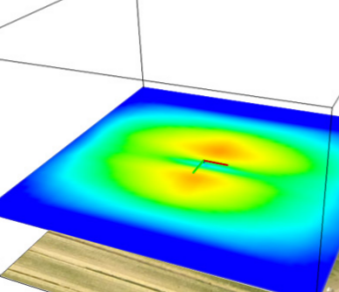

Quadrotor navigation in unknown environments is critical for practical missions such as search-and-rescue. Solving this problem requires addressing three key challenges: path planning in non-convex free space due to obstacles, satisfying quadrotor-specific dynamics and objectives, and exploring unknown regions to expand the map. Recently, the Model Predictive Path Integral (MPPI) method has emerged as a promising solution to the first two challenges. By leveraging sampling-based optimization, it can effectively handle non-convex free space while directly optimizing over the full quadrotor dynamics, enabling the inclusion of quadrotor-specific costs such as energy consumption. However, MPPI has been limited to tracking control that optimizes trajectories only within a small neighborhood around a reference trajectory, as it lacks the ability to explore unknown regions and plan alternative paths when blocked by large obstacles. To address this limitation, we introduce Perception-Aware MPPI (PA-MPPI). In this approach, perception-awareness is characterized by planning and adapting the trajectory online based on perception objectives. Specifically, when the goal is occluded, PA-MPPI incorporates a perception cost that biases trajectories toward those that can observe unknown regions. This expands the mapped traversable space and increases the likelihood of finding alternative paths to the goal. Through hardware experiments, we demonstrate that PA-MPPI, running at 50 Hz, performs on par with the state-of-the-art quadrotor navigation planner for unknown environments in challenging test scenarios. Furthermore, we show that PA-MPPI can serve as a safe and robust action policy for navigation foundation models, which often provide goal poses that are not directly reachable.
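The sampling-based update described above can be illustrated with a minimal sketch. It is not the PA-MPPI implementation: the 2D single-integrator model, the `frontier` point standing in for an unknown region, the form of the perception term, and all parameter values are assumptions chosen for illustration only.

```python
import math
import random


def rollout_cost(x0, controls, goal, frontier, w_perc, dt=0.1):
    """Simulate a 2D single integrator and accumulate a toy cost."""
    x, y = x0
    cost = 0.0
    for vx, vy in controls:
        x += vx * dt
        y += vy * dt
        # Goal-reaching term: squared distance to the goal.
        cost += (x - goal[0]) ** 2 + (y - goal[1]) ** 2
        # Hypothetical perception term: penalize motion that points away
        # from the frontier (a stand-in for "observing unknown regions").
        speed = math.hypot(vx, vy)
        dist = math.hypot(frontier[0] - x, frontier[1] - y)
        if speed > 1e-9 and dist > 1e-9:
            cos_angle = (vx * (frontier[0] - x) + vy * (frontier[1] - y)) / (speed * dist)
            cost += w_perc * (1.0 - cos_angle)
    return cost


def mppi_step(x0, nominal, goal, frontier, w_perc,
              n_samples=256, sigma=0.5, lam=1.0, rng=None):
    """One MPPI update: perturb the nominal controls, weight samples by cost."""
    rng = rng or random.Random(0)
    samples, costs = [], []
    for _ in range(n_samples):
        noisy = [(vx + rng.gauss(0.0, sigma), vy + rng.gauss(0.0, sigma))
                 for vx, vy in nominal]
        samples.append(noisy)
        costs.append(rollout_cost(x0, noisy, goal, frontier, w_perc))
    # Exponential weights; subtracting the minimum cost avoids underflow.
    c_min = min(costs)
    weights = [math.exp(-(c - c_min) / lam) for c in costs]
    total = sum(weights)
    updated = []
    for t in range(len(nominal)):
        vx = sum(w * s[t][0] for w, s in zip(weights, samples)) / total
        vy = sum(w * s[t][1] for w, s in zip(weights, samples)) / total
        updated.append((vx, vy))
    return updated


if __name__ == "__main__":
    nominal = [(0.0, 0.0)] * 20
    plan = mppi_step((0.0, 0.0), nominal, goal=(5.0, 0.0),
                     frontier=(2.0, 3.0), w_perc=2.0)
    print(plan[0])
```

Raising `w_perc` in this sketch shifts the weighted average toward samples that head for the frontier, which mirrors, in a much simplified form, how a perception cost can bias the sampled trajectories.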

References

Y. Zhai, R. Reiter, D. Scaramuzza

PA-MPPI: Perception-Aware Model Predictive Path Integral Control for Quadrotor Navigation in Unknown Environments

Robotics and Automation Letters (RA-L), 2026

PDF

What Matters in RL-Based Methods for Object-Goal Navigation? An Empirical Study and A Unified Framework

Object-Goal Navigation (ObjectNav) is a critical component toward deploying mobile robots in everyday, uncontrolled environments such as homes, schools, and workplaces. In this context, a robot must locate target objects in previously unseen environments using only its onboard perception. Success requires the integration of semantic understanding, spatial reasoning, and long-horizon planning, a combination that remains extremely challenging. While reinforcement learning (RL) has become the dominant paradigm, progress has spanned a wide range of design choices, yet the field still lacks a unifying analysis to determine which components truly drive performance. In this work, we conduct a large-scale empirical study of modular RL-based ObjectNav systems, decomposing them into three key components: perception, policy, and test-time enhancement. Through extensive controlled experiments, we isolate the contribution of each and uncover clear trends: perception quality and test-time strategies are decisive drivers of performance, whereas policy improvements with current methods yield only marginal gains. Building on these insights, we propose practical design guidelines and demonstrate an enhanced modular system that surpasses State-of-the-Art (SotA) methods by 6.6% in SPL and by 2.7% in success rate. We also introduce a human baseline under identical conditions, where experts achieve an average success rate of 98%, underscoring the gap between RL agents and human-level navigation. Our study not only sets the SotA performance but also provides principled guidance for future ObjectNav development and evaluation.
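For readers unfamiliar with the SPL metric reported above, the sketch below computes it using the commonly used definition, Success weighted by Path Length: each successful episode contributes the ratio of the shortest-path length to the longer of the shortest-path length and the path actually taken. The function name and argument layout are illustrative assumptions, and the sketch assumes every shortest-path length is positive.

```python
def spl(successes, shortest_lengths, path_lengths):
    """Success weighted by Path Length, averaged over episodes.

    successes: iterable of 0/1 flags, one per episode.
    shortest_lengths: shortest-path length from start to goal (assumed > 0).
    path_lengths: length of the path the agent actually travelled.
    """
    successes = list(successes)
    total = 0.0
    for s, l, p in zip(successes, shortest_lengths, path_lengths):
        if s:
            # A detour (p > l) reduces the contribution below 1.
            total += l / max(p, l)
    return total / len(successes)


if __name__ == "__main__":
    # Episode 1: success with a 2x detour; episode 2: failure;
    # episode 3: success along the shortest path.
    print(spl([1, 0, 1], [10.0, 5.0, 4.0], [20.0, 7.0, 4.0]))
```

Because failures contribute zero, SPL is never larger than the success rate, which is why the two numbers are usually reported together.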

References

H. Wang, B. Sun, J. Xing, F. Yang, M. Hutter, D. Shah, D. Scaramuzza, M. Pollefeys

What Matters in RL-Based Methods for Object-Goal Navigation? An Empirical Study and A Unified Framework

arXiv, 2025.

PDF

Sight Over Site: Perception-Aware Reinforcement Learning for Efficient Robotic Inspection

Quadrotor navigation in unknown environments is critical for practical missions such as search and rescue. Solving it requires addressing three key challenges: the non-convexity of free space due to obstacles, quadrotor-specific dynamics and objectives, and the need for exploration of unknown regions to find a path to the goal. Recently, the Model Predictive Path Integral (MPPI) method has emerged as a promising solution to the first two challenges. By leveraging sampling-based optimization, it can effectively handle non-convex free space while directly optimizing over the full quadrotor dynamics, enabling the inclusion of quadrotor-specific costs such as energy consumption. However, its performance in unknown environments is limited, as it lacks the ability to explore unknown regions when blocked by large obstacles. To solve this issue, we introduce Perception-Aware MPPI (PA-MPPI). Here, perception awareness is defined as adapting the trajectory online based on perception objectives. Specifically, when the goal is occluded, PA-MPPI’s perception cost biases trajectories toward those that can perceive unknown regions. This expands the mapped traversable space and increases the likelihood of finding alternative paths to the goal. Through hardware experiments, we demonstrate that PA-MPPI, running at 50 Hz with our efficient perception and mapping module, performs up to 100% better than the baseline in challenging settings where the state-of-the-art MPPI fails. In addition, we demonstrate that PA-MPPI can be used as a safe and robust action policy for navigation foundation models, which often provide goal poses that are not directly reachable.

References

R. Kuhlmann*, J. Wolfram*, B. Sun, J. Xing, D. Scaramuzza, M. Pollefeys, C. Cadena

Sight Over Site: Perception-Aware Reinforcement Learning for Efficient Robotic Inspection

arXiv, 2025.

PDF

ForesightNav: Learning Scene Imagination for Efficient Exploration

Understanding how humans leverage prior knowledge to navigate unseen environments while making exploratory decisions is essential for developing autonomous robots with similar abilities. In this work, we propose ForesightNav, a novel exploration strategy inspired by human imagination and reasoning. Our approach equips robotic agents with the capability to predict contextual information, such as occupancy and semantic details, for unexplored regions. These predictions enable the robot to efficiently select meaningful long-term navigation goals, significantly enhancing exploration in unseen environments. We validate our imagination-based approach using the Structured3D dataset, demonstrating accurate occupancy prediction and superior performance in anticipating unseen scene geometry. Our experiments show that the imagination module improves exploration efficiency in unseen environments, achieving a 100% completion rate for PointNav and an SPL of 67% for ObjectNav on the Structured3D Validation split. These contributions demonstrate the power of imagination-driven reasoning for autonomous systems to enhance generalizable and efficient exploration.

References

H. Shah, J. Xing, N. Messikommer, B. Sun, M. Pollefeys, D. Scaramuzza

ForesightNav: Learning Scene Imagination for Efficient Exploration

IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Nashville, 2025

PDF

Code

AERIAL-CORE: AI-Powered Aerial Robots for Inspection and Maintenance of Electrical Power Infrastructures

Large-scale infrastructures are prone to deterioration due to age, environmental influences, and heavy usage. Ensuring their safety through regular inspections and maintenance is crucial to prevent incidents that can significantly affect public safety and the environment. This is especially pertinent in the context of electrical power networks, which, while essential for energy provision, can also be sources of forest fires. Intelligent drones have the potential to revolutionize inspection and maintenance, eliminating the risks for human operators, increasing productivity, reducing inspection time, and improving data collection quality. However, most of the current methods and technologies in aerial robotics have been trialed primarily in indoor testbeds or outdoor settings under strictly controlled conditions, always within the line of sight of human operators. Additionally, these methods and technologies have typically been evaluated in isolation, lacking comprehensive integration. This paper introduces the first autonomous system that combines various innovative aerial robots. This system is designed for extended-range inspections beyond the visual line of sight, features aerial manipulators for maintenance tasks, and includes support mechanisms for human operators working at elevated heights. The paper further discusses the successful validation of this system on numerous electrical power lines, with aerial robots executing flights over 10 kilometers away from their ground control stations.

References

A. Ollero, A. Suarez, C. Papaioannidis, I. Pitas, J.M. Marredo, V. Duong, E. Ebeid, V. Kratky, M. Saska, C. Hanoune, A. Afifi, A. Franchi, C. Vourtsis, D. Floreano, G. Vasiljevic, S. Bogdan, A. Caballero, F. Ruggiero, V. Lippiello, C. Matilla, G. Cioffi, D. Scaramuzza, J.R. Martinez-de Dios, B.C. Arrue, C. Martin, K. Zurad, C. Gaitan, J. Rodriguez, A. Munoz, A. Viguria

AERIAL-CORE: AI-Powered Aerial Robots for Inspection and Maintenance of Electrical Power Infrastructures

arXiv, 2024.

PDF

Video

Autonomous Power Line Inspection with Drones via Perception-Aware MPC

Drones have the potential to revolutionize power line inspection by increasing productivity, reducing inspection time, improving data quality, and eliminating the risks for human operators. Current state-of-the-art systems for power line inspection have two shortcomings: (i) control is decoupled from perception and needs accurate information about the location of the power lines and masts; (ii) collision avoidance is decoupled from the power line tracking, which results in poor tracking in the vicinity of the power masts, and, consequently, in decreased data quality for visual inspection. In this work, we propose a model predictive controller (MPC) that overcomes these limitations by tightly coupling perception and action. Our controller generates commands that maximize the visibility of the power lines while, at the same time, safely avoiding the power masts. For power line detection, we propose a lightweight learning-based detector that is trained only on synthetic data and is able to transfer zero-shot to real-world power line images. We validate our system in simulation and real-world experiments on a mock-up power line infrastructure.

References

J. Xing*, G. Cioffi*, J. Hidalgo-Carrió, D. Scaramuzza

Autonomous Power Line Inspection with Drones via Perception-Aware MPC

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2023.

Best Paper Award!

PDF

YouTube

Code

Learning Perception-Aware Agile Flight in Cluttered Environments

Recently, neural control policies have outperformed existing model-based planning-and-control methods for autonomously navigating quadrotors through cluttered environments in minimum time. However, they are not perception aware, a crucial requirement in vision-based navigation due to the camera's limited field of view and the underactuated nature of a quadrotor. We propose a method to learn neural network policies that achieve perception-aware, minimum-time flight in cluttered environments. Our method combines imitation learning and reinforcement learning (RL) by leveraging a privileged learning-by-cheating framework. Using RL, we first train a perception-aware teacher policy with full-state information to fly in minimum time through cluttered environments. Then, we use imitation learning to distill its knowledge into a vision-based student policy that only perceives the environment via a camera. Our approach tightly couples perception and control, showing a significant advantage in computation speed (10x faster) and success rate. We demonstrate the closed-loop control performance using a physical quadrotor and hardware-in-the-loop simulation at speeds up to 50 km/h.

References

Yunlong Song*, Kexin Shi*, Robert Penicka, Davide Scaramuzza

Learning Perception-Aware Agile Flight in Cluttered Environments

IEEE International Conference on Robotics and Automation (ICRA), 2023.

PDF

YouTube

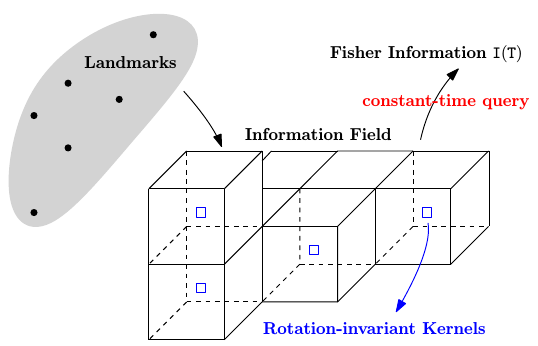

Fisher Information Field: an Efficient and Differentiable Map for Perception-aware Planning

Considering visual localization accuracy at planning time gives preference to robot motion that can be better localized, which benefits vision-based navigation. To integrate knowledge about localization accuracy into planning, a common approach is to compute the Fisher information of the pose estimation process from a set of sparse landmarks. However, this approach scales linearly with the number of landmarks and introduces redundant computation. To overcome these drawbacks, we propose the first dedicated map for evaluating the Fisher information of 6 degree-of-freedom visual localization for perception-aware planning. We separate and precompute the rotation-invariant component of the Fisher information and store it in a voxel grid, namely the Fisher information field. The Fisher information for arbitrary poses can then be computed from the field in constant time. Experimental results show that the proposed Fisher information field can be applied to different planning algorithms and is at least 10 times faster than using the point cloud. Moreover, the proposed map is differentiable, resulting in better performance in trajectory optimization algorithms.
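To make the linear scaling of the landmark-based baseline concrete, the sketch below sums one information matrix per landmark. It is a deliberately simplified assumption, not the paper's formulation: it covers only the 3D position (not the full 6 degree-of-freedom pose), models each observation as a unit bearing vector with isotropic noise `sigma`, and ignores visibility. Under these assumptions, each landmark at distance r with bearing f contributes (I - f fᵀ) / (σ² r²).

```python
import math


def bearing_information(position, landmarks, sigma=1.0):
    """Position-only Fisher information (3x3) from bearing observations.

    The loop visits every landmark, so the cost grows linearly with the
    number of landmarks; this is the per-query cost a precomputed field
    is meant to avoid.
    """
    info = [[0.0] * 3 for _ in range(3)]
    for lm in landmarks:
        d = [lm[k] - position[k] for k in range(3)]
        r2 = sum(c * c for c in d)
        if r2 == 0.0:
            continue  # A landmark at the camera position carries no bearing.
        r = math.sqrt(r2)
        f = [c / r for c in d]
        for i in range(3):
            for j in range(3):
                # Projector onto the plane orthogonal to the bearing.
                proj = (1.0 if i == j else 0.0) - f[i] * f[j]
                info[i][j] += proj / (r2 * sigma ** 2)
    return info


if __name__ == "__main__":
    landmarks = [(0.0, 0.0, 2.0), (2.0, 0.0, 0.0)]
    for row in bearing_information((0.0, 0.0, 0.0), landmarks):
        print(row)
```

Note that a single landmark gives no information along its own bearing direction, which is why the example uses two landmarks in different directions.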

References

Z. Zhang, D. Scaramuzza

Fisher Information Field: an Efficient and Differentiable Map for Perception-aware Planning

arXiv preprint, 2020.

PDF

Video

Code

Z. Zhang, D. Scaramuzza

Beyond Point Clouds: Fisher Information Field for Active Visual Localization

IEEE International Conference on Robotics and Automation, 2019.

PDF

Video

Code

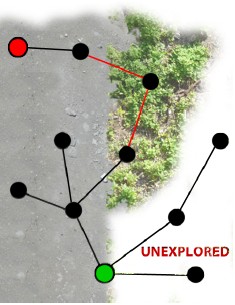

Exploration Without Global Consistency

Most existing exploration approaches either assume a perfect state estimate or, at a heavy computational cost, establish a globally consistent map representation through map optimization. In this research, we investigate whether the task of exploration (fully covering a previously unknown volume) can be solved without either of these two requirements. We propose a novel representation that, by exploiting place recognition, allows determining whether there is space left to explore in a globally topological and locally metric manner.

References

T. Cieslewski, A. Ziegler, D. Scaramuzza

Exploration Without Global Consistency Using Local Volume Consolidation

IFRR International Symposium on Robotics Research (ISRR), Hanoi, 2019.

PDF

YouTube

Fisher Information Field for Active Visual Localization

For mobile robots to localize robustly, actively considering the perception requirement at the planning stage is essential. In this paper, we propose a novel representation for active visual localization. By formulating the Fisher information and sensor visibility carefully, we are able to summarize the localization information into a discrete grid, namely the Fisher information field. The information for arbitrary poses can then be computed from the field in constant time, without the costly iteration over all the 3D landmarks. Experimental results on simulated and real-world data show the great potential of our method in efficient active localization and perception-aware planning. To benefit related research, we release our implementation of the information field to the public.

References

Z. Zhang, D. Scaramuzza

Beyond Point Clouds: Fisher Information Field for Active Visual Localization

IEEE International Conference on Robotics and Automation, 2019.

PDF

Video

Code

PAMPC: Perception-Aware Model Predictive Control

We present a perception-aware model predictive control framework for quadrotors that unifies control and planning with respect to action and perception objectives. Our framework leverages numerical optimization to compute trajectories that satisfy the system dynamics and require control inputs within the limits of the platform. Simultaneously, it optimizes perception objectives for robust and reliable sensing by maximizing the visibility of a point of interest and minimizing its velocity in the image plane. Considering both perception and action objectives for motion planning and control is challenging due to the possible conflicts arising from their respective requirements. For example, for a quadrotor to track a reference trajectory, it needs to rotate to align its thrust with the direction of the desired acceleration. However, the perception objective might require minimizing such rotation to maximize the visibility of a point of interest. A model-based optimization framework, able to consider both perception and action objectives and couple them through the system dynamics, is therefore necessary. Our perception-aware model predictive control framework works in a receding-horizon fashion by iteratively solving a non-linear optimization problem. It is capable of running in real-time, fully onboard our lightweight, small-scale quadrotor using a low-power ARM computer, together with a visual-inertial odometry pipeline. We validate our approach in experiments demonstrating (i) the contradiction between perception and action objectives, and (ii) improved behavior in extremely challenging lighting conditions.
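The two perception objectives named above, keeping a point of interest visible and keeping it slow in the image plane, can be sketched as a simple stage cost. This is an illustrative assumption rather than the cost used in PAMPC: it uses a normalized pinhole projection, measures visibility as the squared distance from the image center, approximates image-plane velocity with a finite difference, and the weights `w_vis` and `w_vel` are hypothetical.

```python
def project(point_cam):
    """Project a 3D point in the camera frame to normalized image coordinates."""
    x, y, z = point_cam
    if z <= 0.0:
        raise ValueError("point must lie in front of the camera (z > 0)")
    return (x / z, y / z)


def perception_cost(point_cam, point_cam_next, dt, w_vis=1.0, w_vel=1.0):
    """Toy perception cost for one time step of a predicted trajectory.

    point_cam, point_cam_next: the point of interest expressed in the camera
    frame at two consecutive time steps separated by dt seconds.
    """
    u0, v0 = project(point_cam)
    u1, v1 = project(point_cam_next)
    # Visibility term: zero when the point sits at the image center.
    visibility = u0 ** 2 + v0 ** 2
    # Velocity term: finite-difference speed of the projection.
    velocity = ((u1 - u0) / dt) ** 2 + ((v1 - v0) / dt) ** 2
    return w_vis * visibility + w_vel * velocity


if __name__ == "__main__":
    # The point drifts sideways in the camera frame between two steps.
    print(perception_cost((0.2, 0.0, 2.0), (0.3, 0.0, 2.0), dt=0.1))
```

In a model predictive controller, a term of this kind would be summed over the prediction horizon alongside the tracking and input costs, so that the optimizer trades off rotation for tracking against keeping the point in view.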

References

D. Falanga, P. Foehn, P. Lu, D. Scaramuzza

PAMPC: Perception-Aware Model Predictive Control for Quadrotors

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, 2018.

PDF

YouTube

Code

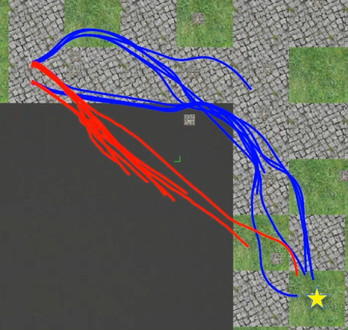

Perception-aware Receding Horizon Navigation for MAVs

To reach a given destination safely and accurately, a micro aerial vehicle needs to be able to avoid obstacles and minimize its state estimation uncertainty at the same time. To achieve this goal, we propose a perception-aware receding horizon approach. In our method, a single forward-looking camera is used for state estimation and mapping. Using the information from the monocular state estimation and mapping system, we generate a library of candidate trajectories and evaluate them in terms of perception quality, collision probability, and distance to the goal. The best trajectory to execute is then selected as the one that maximizes a reward function based on these three metrics. To the best of our knowledge, this is the first work that integrates active vision within a receding horizon navigation framework for a goal reaching task. We demonstrate by simulation and real-world experiments on an actual quadrotor that our active approach leads to improved state estimation accuracy in a goal-reaching task when compared to a purely-reactive navigation system, especially in difficult scenes (e.g., weak texture).
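The selection step described above, scoring a library of candidate trajectories on three metrics and executing the best one, can be sketched as follows. The linear reward, its weights, and the `Candidate` fields are assumptions made for illustration; the paper's actual reward function may differ.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    perception_quality: float     # higher is better
    collision_probability: float  # in [0, 1], lower is better
    distance_to_goal: float       # metres remaining at the end of the candidate


def reward(c, w_perc=1.0, w_coll=5.0, w_goal=0.5):
    """Hypothetical linear reward combining the three metrics."""
    return (w_perc * c.perception_quality
            - w_coll * c.collision_probability
            - w_goal * c.distance_to_goal)


def select_best(candidates, **weights):
    """Return the candidate with the highest reward."""
    return max(candidates, key=lambda c: reward(c, **weights))


if __name__ == "__main__":
    library = [
        Candidate("textured_detour", 0.9, 0.05, 4.0),
        Candidate("direct_low_texture", 0.2, 0.0, 3.5),
        Candidate("through_clutter", 0.8, 0.5, 2.0),
    ]
    print(select_best(library).name)
```

In a receding-horizon loop, only the first portion of the selected trajectory would be executed before the library is regenerated and rescored with the updated map and state estimate.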

References

Z. Zhang, D. Scaramuzza

Perception-aware Receding Horizon Navigation for MAVs

IEEE International Conference on Robotics and Automation, 2018.

PDF

Video

ICRA18 Video Pitch

PPT

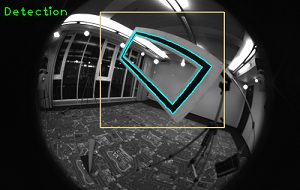

Aggressive Quadrotor Flight through Narrow Gaps with Onboard Sensing and Computing using Active Vision

In this paper, we address one of the main challenges towards autonomous quadrotor flight in complex environments, which is flight through narrow gaps. We present a method that allows a quadrotor to autonomously and safely pass through a narrow, inclined gap using only its onboard visual-inertial sensors and computer. Previous works have addressed quadrotor flight through gaps using external motion-capture systems for state estimation. Instead, we estimate the state by fusing gap detection from a single onboard camera with an IMU. Our method generates a trajectory that considers geometric, dynamic, and perception constraints: during the approach maneuver, the quadrotor always faces the gap to allow state estimation, while respecting the vehicle dynamics; during the traverse through the gap, the distance of the quadrotor to the edges of the gap is maximized. Furthermore, we replan the trajectory during its execution to cope with the varying uncertainty of the state estimate. We successfully evaluate and demonstrate the proposed approach in many real experiments, achieving a success rate of 80% and gap orientations up to 45 degrees. To the best of our knowledge, this is the first work that addresses and successfully reports aggressive flight through narrow gaps using only onboard sensing and computing.

References

D. Falanga, E. Mueggler, M. Faessler, D. Scaramuzza

Aggressive Quadrotor Flight through Narrow Gaps with Onboard Sensing and Computing

IEEE International Conference on Robotics and Automation, accepted, 2017.

PDF Arxiv

YouTube

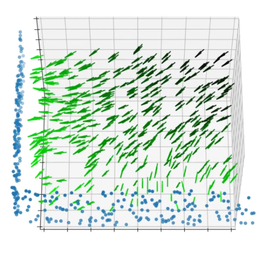

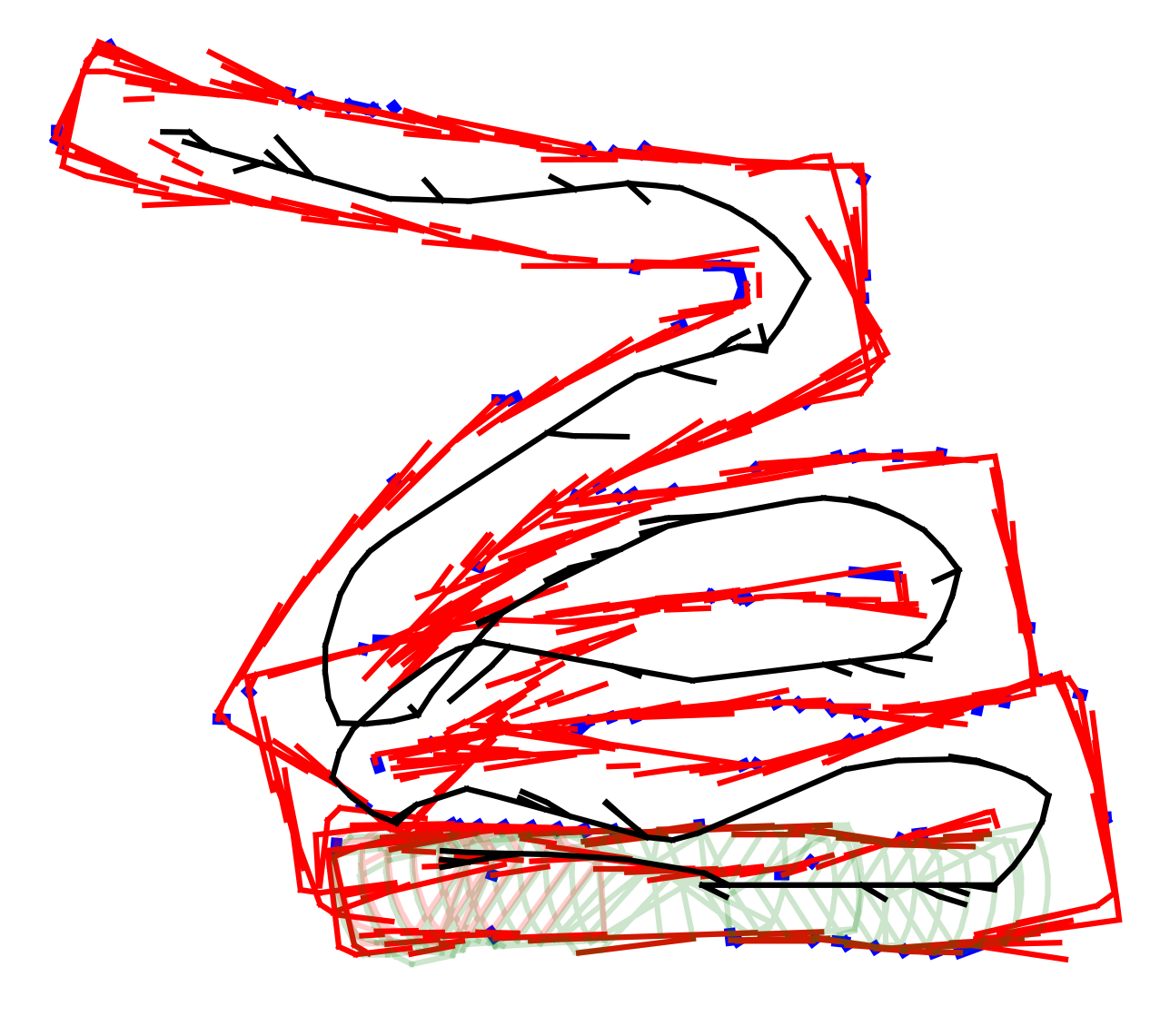

Active Autonomous Aerial Exploration for Ground Robot Path Planning

We address the problem of planning a path for a ground robot through unknown terrain, using observations from a flying robot. In search and rescue missions, which are our target scenarios, the time from arrival at the disaster site to the delivery of aid is critically important. Previous works required exhaustive exploration before path planning, which is time-consuming but eventually leads to an optimal path for the ground robot. Instead, we propose active exploration of the environment, where the flying robot chooses regions to map in a way that optimizes the overall response time of the system, which is the combined time for the air and ground robots to execute their missions. In our approach, we estimate terrain classes throughout our terrain map, and we also add elevation information in areas where the active exploration algorithm has chosen to perform 3D reconstruction. This terrain information is used to estimate feasible and efficient paths for the ground robot. By exploring the environment actively, we achieve superior response times compared to both exhaustive and greedy exploration strategies. We demonstrate the performance and capabilities of the proposed system in simulated and real-world outdoor experiments. To the best of our knowledge, this is the first work to address ground robot path planning using active aerial exploration.

References

J. Delmerico, E. Mueggler, J. Nitsch, D. Scaramuzza

Active Autonomous Aerial Exploration for Ground Robot Path Planning

IEEE Robotics and Automation Letters (RA-L), 2017.

PDF

YouTube

Perception-aware Path Planning

While most of the existing work on path planning focuses on reaching a goal as fast as possible, or with minimal effort, these approaches disregard the appearance of the environment and only consider the geometric structure.

Vision-controlled robots, however, need to leverage the photometric information in the scene to localize themselves and perform egomotion estimation.

In this work, we argue that motion planning for vision-controlled robots should be perception-aware in that the robot should also favor texture-rich areas to minimize the localization uncertainty during a goal-reaching task.

Thus, we describe how to optimally incorporate the photometric information (i.e., texture) of the scene, in addition to the geometric information, to compute the uncertainty of vision-based localization during path planning.

References

G. Costante, J. Delmerico, M. Werlberger, P. Valigi, D. Scaramuzza

Exploiting Photometric Information for Planning under Uncertainty

Springer Tracts in Advanced Robotics (International Symposium on Robotic Research), 2017.

PDF

PDF of longer paper version (Technical report)

YouTube

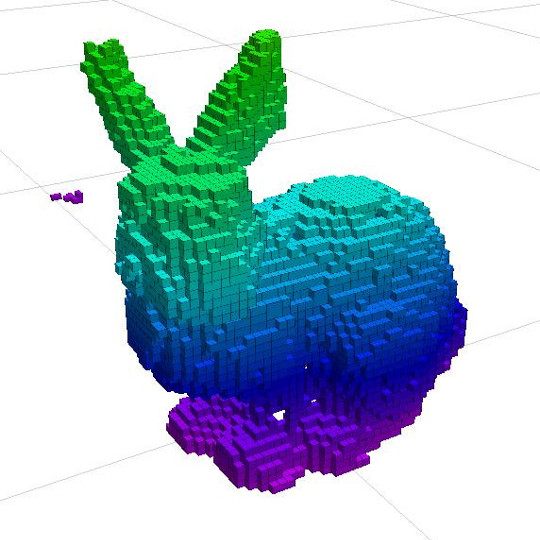

Information Gain Based Active Reconstruction

The Information Gain Based Active Reconstruction Framework is a modular, robot-agnostic software package for performing next-best-view planning for volumetric object reconstruction using a range sensor.

Our implementation can be easily adapted to any mobile robot equipped with any camera-based range sensor (e.g., stereo camera, structured light sensor) to iteratively observe an object to generate a volumetric map and a point cloud model.

The algorithm allows the user to define the information gain metric for choosing the next best view, and many formulations for these metrics are evaluated and compared in our ICRA paper.

This framework is released open source as a ROS-compatible package for autonomous 3D reconstruction tasks.

Download the code from GitHub.
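As a rough illustration of what a user-defined information gain metric for next-best-view planning can look like, the sketch below scores each candidate view by the occupancy entropy of the voxels its rays traverse, discounted by the probability that earlier voxels on the ray block the view. This is one plausible metric written from scratch for illustration; it does not use the package's API, and the function names and the ray representation are assumptions.

```python
import math


def voxel_entropy(p):
    """Binary entropy (in bits) of an occupancy probability."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def view_information_gain(rays):
    """Expected information for one view.

    rays: list of rays, each a list of voxel occupancy probabilities ordered
    from the sensor outward.
    """
    gain = 0.0
    for ray in rays:
        visibility = 1.0  # Probability that the ray reaches the current voxel.
        for p in ray:
            gain += visibility * voxel_entropy(p)
            visibility *= (1.0 - p)
    return gain


def next_best_view(views):
    """Pick the view name with the largest information gain."""
    return max(views, key=lambda name: view_information_gain(views[name]))


if __name__ == "__main__":
    views = {
        "front": [[0.5, 0.5]],  # Two unknown voxels along one ray.
        "side": [[0.0, 0.5]],   # Known-free voxel, then an unknown one.
        "back": [[1.0, 0.5]],   # Known-occupied voxel hides the rest.
    }
    print(next_best_view(views))
```

Swapping `view_information_gain` for another scoring function changes the exploration behavior without touching the selection loop, which is the kind of modularity a user-definable metric provides.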

References

S. Isler, R. Sabzevari, J. Delmerico, D. Scaramuzza

An Information Gain Formulation for Active Volumetric 3D Reconstruction

IEEE International Conference on Robotics and Automation (ICRA), Stockholm, 2016.

PDF

YouTube

Software

Active, Dense Reconstruction

The estimation of the depth uncertainty makes REMODE extremely attractive for motion planning and active-vision problems.

In this work, we investigate the following problem: Given the image of a scene, what is the trajectory that a robot-mounted camera should follow to allow optimal dense 3D reconstruction?

The solution we propose is based on maximizing the information gain over a set of candidate trajectories.

In order to estimate the information that we expect from a camera pose, we introduce a novel formulation of the measurement uncertainty that accounts for the scene appearance (i.e., texture in the reference view), the scene depth, and the vehicle pose.

We successfully demonstrate our approach in the case of realtime, monocular reconstruction from a small quadrotor and validate the effectiveness of our solution in both synthetic and real experiments.

This is the first work on active, monocular dense reconstruction, which chooses motion trajectories that minimize perceptual ambiguities inferred by the texture in the scene.

References

C. Forster, M. Pizzoli, D. Scaramuzza

Appearance-based Active, Monocular, Dense Reconstruction for Micro Aerial Vehicles

Robotics: Science and Systems, Berkeley, 2014.

PDF