E-RAFT: Dense Optical Flow from Event Cameras

We are excited to share our 3DV oral paper!

Description

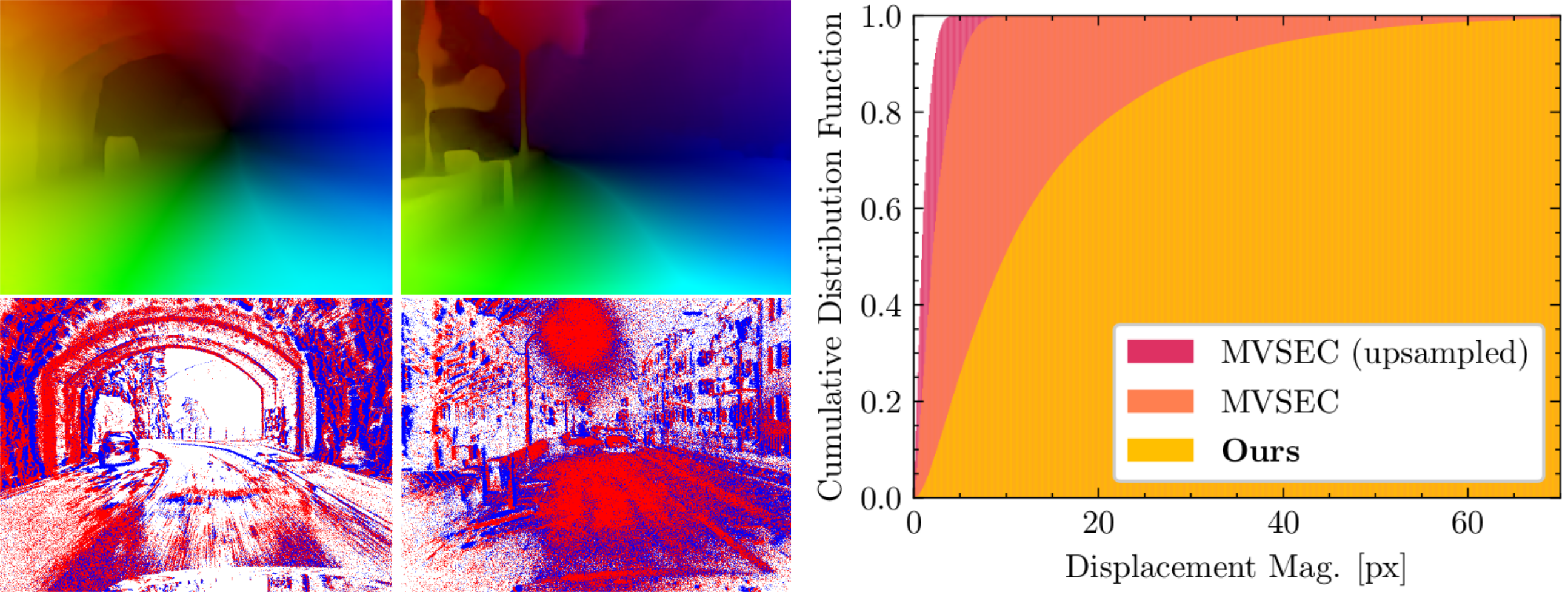

We propose to incorporate feature correlation and sequential processing into dense optical flow estimation from event cameras. Modern frame-based optical flow methods rely heavily on matching costs computed from feature correlation. In contrast, no existing optical flow method for event cameras explicitly computes matching costs. Instead, learning-based approaches using events usually resort to the U-Net architecture to estimate optical flow sparsely. Our key finding is that introducing correlation features significantly improves results compared to previous methods that rely solely on convolution layers. Compared to the state of the art, our proposed approach computes dense optical flow and reduces the end-point error by 23% on MVSEC. Furthermore, we show that all optical flow methods developed so far for event cameras have been evaluated on datasets with very small displacement fields, with a maximum flow magnitude of 10 pixels. Based on this observation, we introduce a new real-world dataset that exhibits displacement fields with magnitudes up to 210 pixels and 3 times higher camera resolution. Our proposed approach reduces the end-point error on this dataset by 66%.

Publication

If you use this work in your research, please cite the following paper:

E-RAFT: Dense Optical Flow from Event Cameras

International Conference on 3D Vision (3DV), 2021.

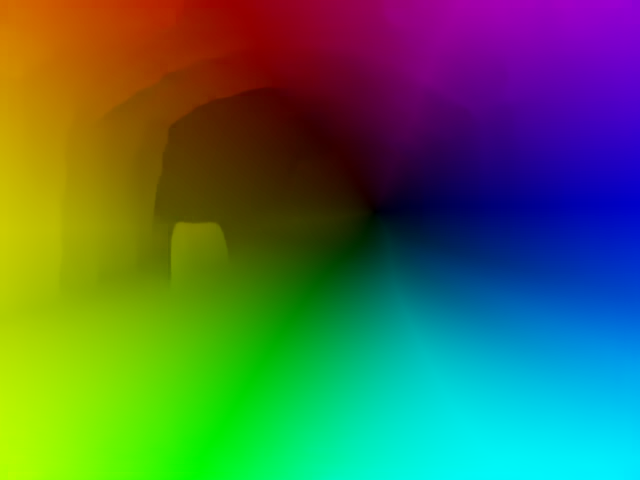

E-RAFT Overview

The approach combines feature matching with black-box feature extraction by a CNN to predict optical flow from event cameras:

- We build two event representations from a sequence of events

- The network extracts correlation features from both representations

- At the same time, the second representation is fed directly through a CNN to an update network (a gated recurrent unit, GRU)

- The network iteratively matches correlation features and updates the optical flow prediction
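The steps above can be illustrated with a small NumPy sketch. It is a minimal illustration under stated assumptions, not the E-RAFT implementation: it assumes a voxel-grid event representation with linear interpolation along time (this page does not say which representation is used), computes a RAFT-style all-pairs correlation volume scaled by 1/sqrt(C), and replaces the learned CNN encoders and the GRU update with a toy argmax readout. The function names `events_to_voxel_grid`, `all_pairs_correlation`, and `argmax_flow` are hypothetical.

```python
import numpy as np


def events_to_voxel_grid(x, y, t, p, num_bins, height, width):
    """Accumulate events into a (num_bins, H, W) voxel grid (assumed representation).

    Each event adds its polarity (+1 / -1) to the two temporally nearest bins,
    weighted by linear interpolation along the normalized time axis.
    """
    grid = np.zeros((num_bins, height, width), dtype=np.float64)
    if len(t) == 0:
        return grid
    x = np.asarray(x, dtype=int)
    y = np.asarray(y, dtype=int)
    t = np.asarray(t, dtype=np.float64)
    # Map timestamps to the range [0, num_bins - 1]
    t_norm = (num_bins - 1) * (t - t.min()) / max(t.max() - t.min(), 1e-9)
    pol = np.where(np.asarray(p) > 0, 1.0, -1.0)
    left = np.floor(t_norm).astype(int)
    frac = t_norm - left
    for bins, weight in ((left, 1.0 - frac), (left + 1, frac)):
        ok = bins < num_bins  # the right neighbour of the last bin does not exist
        np.add.at(grid, (bins[ok], y[ok], x[ok]), pol[ok] * weight[ok])
    return grid


def all_pairs_correlation(f1, f2):
    """Dot product between every feature vector of f1 and every one of f2.

    f1, f2: (C, H, W) feature maps. Returns an (H, W, H, W) volume,
    scaled by 1 / sqrt(C) as in RAFT.
    """
    c = f1.shape[0]
    return np.einsum("chw,cuv->hwuv", f1, f2) / np.sqrt(c)


def argmax_flow(corr):
    """Toy readout: displacement to the best-matching pixel in the second map.

    Returns a (2, H, W) array of (dx, dy). A RAFT-style network instead
    refines the flow iteratively with a recurrent update unit.
    """
    h, w = corr.shape[:2]
    best = corr.reshape(h, w, h * w).argmax(axis=-1)
    ty, tx = np.unravel_index(best, (h, w))
    ys, xs = np.mgrid[0:h, 0:w]
    return np.stack([tx - xs, ty - ys], axis=0)


# Usage: distinct one-hot features per pixel, shifted by (dy, dx) = (1, 2) with wrap-around
f1 = np.eye(20).reshape(20, 4, 5)
f2 = np.roll(f1, shift=(1, 2), axis=(1, 2))
flow = argmax_flow(all_pairs_correlation(f1, f2))
print(flow[:, 0, 0])  # [2 1]
```

The argmax readout only shows why correlation acts as a matching cost: the highest dot product marks the most likely correspondence. In a RAFT-style design such as the one described above, the feature maps come from learned encoders, and the correlation volume is queried repeatedly while the update network refines the flow estimate.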

For more details, please consult the paper.

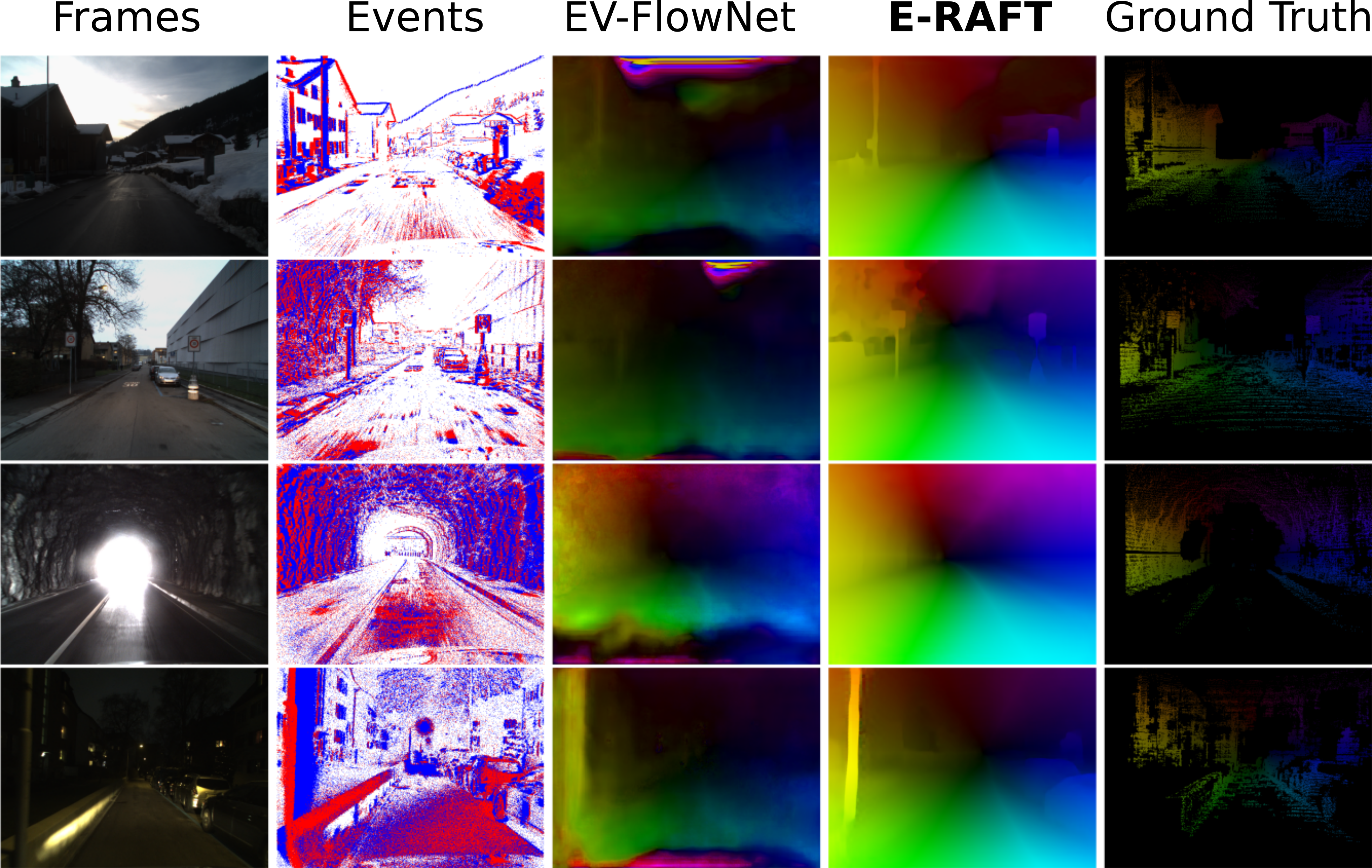

Results

Frames are for visualization purposes only; they are not used by the methods.

DSEC-Flow Dataset

We extend the DSEC dataset with high-quality optical flow ground truth maps. DSEC-Flow contains:

- high-quality, accurately aligned data

- approximately 10k optical flow maps

- at VGA resolution in 24 sequences

- at day and night with challenging HDR scenes

- optical flow magnitudes well beyond prior art (MVSEC)

Automatic Evaluation

Our evaluation is automated, and anyone can submit their own predictions to the public benchmark. The main metric used for the ranking is the end-point error (EPE) of the optical flow on the test set of DSEC-Flow.

We also provide the following error metrics:

- NPE: N-pixel error, the percentage of ground-truth pixels whose optical flow error magnitude is larger than N pixels, where N is 1, 2, or 3.

- EPE: end-point error, the average L2 norm of the optical flow error.

- AE: angular error between the predicted and ground-truth optical flow.
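The metrics above can be computed as in the following NumPy sketch. It is an illustration, not the benchmark's evaluation code: the function name `flow_metrics`, the (2, H, W) flow layout, the validity mask, and the angular-error convention (the angle between the 3D vectors (u, v, 1) and (u_gt, v_gt, 1), a common definition in the optical flow literature) are assumptions.

```python
import numpy as np


def flow_metrics(pred, gt, valid):
    """Compute EPE, 1PE/2PE/3PE (in percent) and AE (in degrees).

    pred, gt: (2, H, W) flow fields in pixels, channel 0 = u (x), channel 1 = v (y).
    valid:    (H, W) boolean mask of pixels that have ground truth.
    """
    err = pred[:, valid] - gt[:, valid]        # (2, N) flow error vectors
    epe = np.linalg.norm(err, axis=0)          # per-pixel L2 norm of the error
    metrics = {"EPE": float(epe.mean())}
    for n in (1, 2, 3):
        # Percentage of valid pixels whose error exceeds n pixels
        metrics[f"{n}PE"] = float(100.0 * np.mean(epe > n))
    # Assumed AE convention: angle between (u, v, 1) and (u_gt, v_gt, 1)
    u, v = pred[0, valid], pred[1, valid]
    ug, vg = gt[0, valid], gt[1, valid]
    cos = (u * ug + v * vg + 1.0) / (
        np.sqrt(u**2 + v**2 + 1.0) * np.sqrt(ug**2 + vg**2 + 1.0)
    )
    metrics["AE"] = float(np.degrees(np.arccos(np.clip(cos, -1.0, 1.0))).mean())
    return metrics


# Usage: errors of 0, 3 and 1.5 pixels at three valid pixels, one pixel without ground truth
gt = np.zeros((2, 2, 2))
pred = np.zeros((2, 2, 2))
pred[0, 0, 1] = 3.0
pred[1, 1, 0] = 1.5
valid = np.array([[True, True], [True, False]])
print(flow_metrics(pred, gt, valid))
```

Pixels without ground truth are excluded through the mask before any averaging. The benchmark's own evaluation code remains authoritative for details such as masking and how errors are averaged across sequences.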